Download the PHP package charescape/llphant without Composer

On this page you can find all versions of the php package charescape/llphant. It is possible to download/install these versions without Composer. Possible dependencies are resolved automatically.

Information about the package llphant

LLPhant - A comprehensive PHP Generative AI Framework

We designed this framework to be as simple as possible, while still providing you with the tools you need to build powerful apps. It is compatible with Symfony and Laravel.

We are working to expand the support of different LLMs. Right now, we support OpenAI, Anthropic, Mistral, Ollama, and services compatible with the OpenAI API such as LocalAI. Ollama can be used to run LLMs such as Llama 2 locally.

We want to thank a few amazing projects that we use here or that inspired us:

- the learnings from using LangChain and LlamaIndex

- the excellent work from the OpenAI PHP SDK.

You can find great external resources on LLPhant (ping us to add yours):

- 🇫🇷 Construire un RAG en PHP avec la doc de Symfony, LLPhant et OpenAI : Tutoriel Complet

- 🇫🇷 Retour d'expérience sur la création d'un agent autonome

- 🇬🇧 Exploring AI riding an LLPhant

Sponsor

LLPhant is sponsored by:

- AGO. Generative AI customer support solutions.

- Theodo, a leading digital agency building web applications with Generative AI.

Table of Contents

- Get Started

- Database

- Use Case

- Usage

- Chat

- Image

- Speech to text

- Tools

- Embeddings

- VectorStore and Search

- Question Answering

- AutoPHP

- Contributors

- Sponsor

Get Started

Requires PHP 8.1+

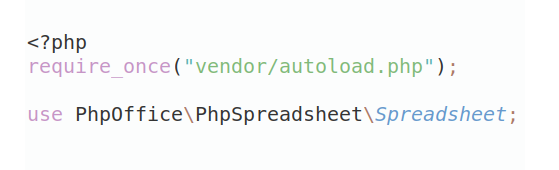

First, install LLPhant via the Composer package manager:

If you want to try the latest features of this library, you can use:

You may also want to check the requirements for the OpenAI PHP SDK, as it is the main client.

Use Case

There are plenty of use cases for Generative AI and new ones are created every day. Let's see the most common ones. Based on a survey from the MLOps community and this survey from McKinsey, the most common use cases of AI are the following:

- Create semantic search that can find relevant information in a lot of data. Example: Slite

- Create chatbots / augmented FAQ that use semantic search and text summarization to answer customer questions. Example: Quivr uses similar technology.

- Create personalized content for your customers (product page, emails, messages, ...). Example: Carrefour.

- Create a text summarizer that can summarize a long text into a short one.

Not widely spread yet but with increasing adoption:

- Create a personal shopper for an augmented e-commerce experience. Example: Madeline

- Create an AI agent to perform various tasks autonomously. Example: AutoGPT

- Create a coding tool that can help you write or review code. Example: Code Review GPT

If you want to discover more uses from the community, you can see a list of GenAI Meetups here. You can also see other use cases on Qdrant's website.

Usage

You can use OpenAI, Mistral, Ollama or Anthropic as LLM engines. Here you can find a list of supported features for each AI engine.

OpenAI

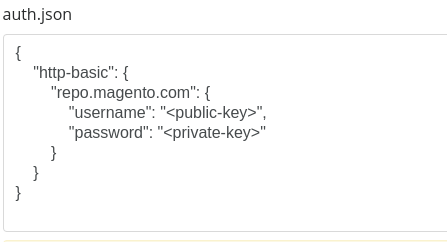

The simplest way to enable calls to OpenAI is to set the OPENAI_API_KEY environment variable.

You can also create an OpenAIConfig object and pass it to the constructor of the OpenAIChat or OpenAIEmbeddings.

Mistral

If you want to use Mistral, specify the model with the OpenAIConfig object and pass it to MistralAIChat.

Ollama

If you want to use Ollama, specify the model with the OllamaConfig object and pass it to OllamaChat.

Anthropic

To call Anthropic models you have to provide an API key. You can set the ANTHROPIC_API_KEY environment variable.

You also have to specify the model with the AnthropicConfig object and pass it to AnthropicChat.

Creating a chat with no configuration will use the CLAUDE_3_HAIKU model.

OpenAI compatible APIs like LocalAI

The simplest way to enable calls to an OpenAI-compatible API is to set the OPENAI_API_KEY and OPENAI_BASE_URL environment variables.

You can also create an OpenAIConfig object and pass it to the constructor of the OpenAIChat or OpenAIEmbeddings.

Here you can find a docker compose file for running LocalAI on your machine for development purposes.

Chat

💡 This class can be used to generate content, to create a chatbot or to create a text summarizer.

You can use the OpenAIChat, MistralAIChat or OllamaChat to generate text or to create a chat.

We can use it to simply generate text from a prompt. This asks the LLM directly for an answer.

If you want to display a stream of text in your frontend, like in ChatGPT, you can use the following method.

You can add an instruction so that the LLM behaves in a specific manner.

Images

Reading images

With OpenAI chat you can use images as input for your chat. For example:

Generating images

You can use the OpenAIImage to generate images.

We can use it to simply generate an image from a prompt.

Speech to text

You can use OpenAIAudio to transcribe audio files.

Customizing System Messages in Question Answering

When using the QuestionAnswering class, it is possible to customize the system message to guide the AI's response style and context sensitivity according to your specific needs. This feature allows you to enhance the interaction between the user and the AI, making it more tailored and responsive to specific scenarios.

Here's how you can set a custom system message:

Tools

This feature is amazing, and it is available for OpenAI, Anthropic and Ollama (just for a subset of its available models).

OpenAI has refined its model to determine whether tools should be invoked. To utilize this, simply send a description of the available tools to OpenAI, either as a single prompt or within a broader conversation.

In the response, the model will provide the names of the tools to call along with the parameter values, if it deems that one or more tools should be called.

One potential application is to ascertain if a user has additional queries during a support interaction. Even more impressively, it can automate actions based on user inquiries.

We made it as simple as possible to use this feature.

Let's see an example of how to use it. Imagine you have a class that sends emails.

You can create a FunctionInfo object that will describe your method to OpenAI. Then you can add it to the OpenAIChat object. If the response from OpenAI contains a tool's name and parameters, LLPhant will call the tool.

This PHP script will most likely call the sendMail method that we pass to OpenAI.
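To make "LLPhant will call the tool" more concrete, here is a minimal plain-PHP sketch of the dispatch step. It does not use LLPhant itself: the MailerExample class, the dispatchToolCall function and the shape of the $toolCall array are all hypothetical names chosen for illustration, and the library's internal mechanism may differ.

```php
<?php
declare(strict_types=1);

// Hypothetical mailer standing in for "a class that sends emails".
final class MailerExample
{
    /** @var string[] */
    public array $log = [];

    public function sendMail(string $subject, string $body, string $email): void
    {
        // A real implementation would send the message; this sketch only records it.
        $this->log[] = sprintf('To %s: %s', $email, $subject);
    }
}

/**
 * Call the method named in a tool call on $instance, passing arguments by name.
 * The shape of $toolCall is an assumption made for this sketch.
 *
 * @param array{name: string, arguments: array<string, mixed>} $toolCall
 * @param string[] $allowedMethods methods the model is allowed to trigger
 */
function dispatchToolCall(object $instance, array $toolCall, array $allowedMethods): mixed
{
    if (!in_array($toolCall['name'], $allowedMethods, true)) {
        throw new InvalidArgumentException('Unknown tool: ' . $toolCall['name']);
    }

    // String keys are unpacked as named arguments (PHP 8.0+).
    return $instance->{$toolCall['name']}(...$toolCall['arguments']);
}

$mailer = new MailerExample();
$toolCall = [
    'name' => 'sendMail',
    'arguments' => [
        'subject' => 'Hello',
        'body' => 'How are you?',
        'email' => 'student@example.com',
    ],
];

dispatchToolCall($mailer, $toolCall, ['sendMail']);
print_r($mailer->log);
```

The allow-list matters in this sketch: the model chooses the tool name, so only methods that were explicitly described to it should be callable.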

If you want to have more control over the description of your function, you can build it manually:

You can safely use the following types in the Parameter object: string, int, float, bool. The array type is supported but still experimental.

With AnthropicChat you can also tell the LLM engine to use the results of the tool called locally as an input for the next inference.

Here is a simple example. Suppose we have a WeatherExample class with a currentWeatherForLocation method that calls an external service to get weather information.

This method takes as input a string describing the location and returns a string with the description of the current weather.

Embeddings

💡 Embeddings are used to compare two texts and see how similar they are. This is the base of semantic search.

An embedding is a vector representation of a text that captures the meaning of the text. For OpenAI's small model, it is a float array of 1536 elements.
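As an illustration of how two embeddings can be compared, the following plain-PHP sketch computes the cosine similarity of two vectors. Cosine similarity is one common choice of measure and is an assumption here, not necessarily the one used by every vector store; the function name and the three-dimensional toy vectors are made up for the example.

```php
<?php
declare(strict_types=1);

/**
 * Cosine similarity between two equal-length float vectors.
 * Returns a value in [-1, 1]; higher means more similar.
 *
 * @param float[] $a
 * @param float[] $b
 */
function cosineSimilarity(array $a, array $b): float
{
    if ($a === [] || count($a) !== count($b)) {
        throw new InvalidArgumentException('Vectors must be non-empty and of equal length.');
    }

    $dot = 0.0;
    $normA = 0.0;
    $normB = 0.0;
    foreach ($a as $i => $value) {
        $dot += $value * $b[$i];
        $normA += $value * $value;
        $normB += $b[$i] * $b[$i];
    }

    if ($normA == 0.0 || $normB == 0.0) {
        throw new InvalidArgumentException('Vectors must not be all zeros.');
    }

    return $dot / (sqrt($normA) * sqrt($normB));
}

// Toy 3-dimensional "embeddings"; real ones have hundreds or thousands of dimensions.
$cat = [0.9, 0.1, 0.0];
$kitten = [0.8, 0.2, 0.1];
$invoice = [0.0, 0.1, 0.9];

printf("cat vs kitten:  %.3f\n", cosineSimilarity($cat, $kitten));
printf("cat vs invoice: %.3f\n", cosineSimilarity($cat, $invoice));
```

In this toy data the first pair scores much higher than the second, which is the property semantic search relies on.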

To manipulate embeddings we use the Document class that contains the text and some metadata useful for the vector store.

Creating an embedding follows this flow:

Read data

The first part of the flow is to read data from a source. This can be a database, a csv file, a json file, a text file, a website, a pdf, a word document, an excel file, ... The only requirement is that you can read the data and that you can extract the text from it.

For now we only support text files, pdf and docx, but we plan to support other data types in the future.

You can use the FileDataReader class to read a file. It takes a path to a file or a directory as a parameter.

The second optional parameter is the class name of the entity that will be used to store the embedding.

The class needs to extend the Document class, or the DoctrineEmbeddingEntityBase class (which extends the Document class) if you want to use the Doctrine vector store.

Here is an example of using a sample PlaceEntity class as document type:

If it's OK for you to use the default Document class, you can go this way:

To create your own data reader you need to create a class that implements the DataReader interface.

Document Splitter

Embedding models have a limit on the size of the string they can process.

To avoid this problem we split the document into smaller chunks.

The DocumentSplitter class is used to split the document into smaller chunks.
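The idea of chunking can be sketched in plain PHP as follows. This is not the DocumentSplitter implementation: splitIntoChunks is a hypothetical helper that breaks only on whitespace and measures length in bytes, whereas a real splitter may use other separators and rules.

```php
<?php
declare(strict_types=1);

/**
 * Split text into chunks of at most $maxLength bytes, breaking on whitespace.
 * Illustrative only; this is not LLPhant's DocumentSplitter.
 *
 * @return string[]
 */
function splitIntoChunks(string $text, int $maxLength): array
{
    if ($maxLength < 1) {
        throw new InvalidArgumentException('$maxLength must be at least 1.');
    }

    $words = preg_split('/\s+/', trim($text), -1, PREG_SPLIT_NO_EMPTY);
    $chunks = [];
    $current = '';

    foreach ($words as $word) {
        $candidate = $current === '' ? $word : $current . ' ' . $word;
        if (strlen($candidate) <= $maxLength) {
            $current = $candidate;
            continue;
        }
        if ($current !== '') {
            $chunks[] = $current;
        }
        // A single word longer than the limit is kept whole in this sketch.
        $current = $word;
    }

    if ($current !== '') {
        $chunks[] = $current;
    }

    return $chunks;
}

$chunks = splitIntoChunks('The quick brown fox jumps over the lazy dog', 15);
print_r($chunks);
```

Each resulting chunk would then be embedded separately, so the limit should be chosen with the embedding model's input size in mind.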

Embedding Formatter

The EmbeddingFormatter is an optional step to format each chunk of text into a format with the most context.

Adding a header and links to other documents can help the LLM to understand the context of the text.

Embedding Generator

This is the step where we generate the embedding for each chunk of text by calling the LLM.

21 February 2024: Adding VoyageAI embeddings

You need to have a VoyageAI account to use this API. More information on the VoyageAI website.

And you need to set up the VOYAGE_AI_API_KEY environment variable or pass it to the constructor of the Voyage3LargeEmbeddingGenerator class.

Here is an example of how to use it, just for the vector transformation:

For RAG optimization, you should be using the forRetrieval() and forStorage() methods:

Currently, some chains do not support the methods for storage and retrieval!

30 January 2024: Adding Mistral embedding API

You need to have a Mistral account to use this API. More information on the Mistral website.

And you need to set up the MISTRAL_API_KEY environment variable or pass it to the constructor of the MistralEmbeddingGenerator class.

25 January 2024: New embedding models and API updates

OpenAI has 2 new models that can be used to generate embeddings. More information on the OpenAI Blog.

| Status | Model | Embedding size |
|---|---|---|
| Default | text-embedding-ada-002 | 1536 |
| New | text-embedding-3-small | 1536 |
| New | text-embedding-3-large | 3072 |

You can embed the documents using the following code:

You can also create an embedding from a text using the following code:

There is the OllamaEmbeddingGenerator as well, which has an embedding size of 1024.

VectorStores

Once you have embeddings you need to store them in a vector store. The vector store is a database that can store vectors and perform a similarity search. There are currently these vectorStore classes:

- MemoryVectorStore stores the embeddings in memory

- FileSystemVectorStore stores the embeddings in a file

- DoctrineVectorStore stores the embeddings in a PostgreSQL or MariaDB database. (requires doctrine/orm)

- QdrantVectorStore stores the embeddings in a Qdrant vectorStore. (requires hkulekci/qdrant)

- RedisVectorStore stores the embeddings in a Redis database. (requires predis/predis)

- ElasticsearchVectorStore stores the embeddings in an Elasticsearch database. (requires elasticsearch/elasticsearch)

- MilvusVectorStore stores the embeddings in a Milvus database.

- ChromaDBVectorStore stores the embeddings in a ChromaDB database.

- AstraDBVectorStore stores the embeddings in an AstraDB database.

- OpenSearchVectorStore stores the embeddings in an OpenSearch database, which is a fork of Elasticsearch.

- TypesenseVectorStore stores the embeddings in a Typesense database.

Example of usage with the DoctrineVectorStore class to store the embeddings in a database:

Once you have done that you can perform a similarity search over your data. You need to pass the embedding of the text you want to search and the number of results you want to get.
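Conceptually, a similarity search scores every stored embedding against the query embedding and returns the best matches. The plain-PHP sketch below shows that idea with a hypothetical TinyVectorStore class; it is not one of the LLPhant vector stores, it assumes cosine similarity and non-zero vectors, and the toy embeddings are invented for the example.

```php
<?php
declare(strict_types=1);

// Hypothetical in-memory store, for illustration only.
final class TinyVectorStore
{
    /** @var array<int, array{text: string, embedding: float[]}> */
    private array $items = [];

    /** @param float[] $embedding */
    public function add(string $text, array $embedding): void
    {
        $this->items[] = ['text' => $text, 'embedding' => $embedding];
    }

    /**
     * @param float[] $queryEmbedding
     * @return string[] texts of the $k most similar items, best first
     */
    public function similaritySearch(array $queryEmbedding, int $k): array
    {
        $scored = [];
        foreach ($this->items as $item) {
            $scored[] = [
                'text' => $item['text'],
                'score' => self::cosine($queryEmbedding, $item['embedding']),
            ];
        }

        // Sort by descending score, then keep the first $k entries.
        usort($scored, static fn (array $x, array $y): int => $y['score'] <=> $x['score']);

        return array_column(array_slice($scored, 0, $k), 'text');
    }

    /**
     * Assumes equal-length, non-zero vectors.
     *
     * @param float[] $a
     * @param float[] $b
     */
    private static function cosine(array $a, array $b): float
    {
        $dot = 0.0;
        $normA = 0.0;
        $normB = 0.0;
        foreach ($a as $i => $v) {
            $dot += $v * $b[$i];
            $normA += $v * $v;
            $normB += $b[$i] * $b[$i];
        }

        return $dot / (sqrt($normA) * sqrt($normB));
    }
}

$store = new TinyVectorStore();
$store->add('Paris is the capital of France', [0.9, 0.1, 0.0]);
$store->add('Pasta is cooked in boiling water', [0.1, 0.9, 0.1]);
$store->add('Lyon is a city in France', [0.8, 0.2, 0.1]);

// Hypothetical embedding of a query about French geography.
$results = $store->similaritySearch([1.0, 0.0, 0.0], 2);
print_r($results);
```

A linear scan like this is fine for a handful of documents; dedicated vector databases use indexes to avoid comparing the query against every stored vector.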

For a full example, have a look at the Doctrine integration test files.

VectorStores vs DocumentStores

As we have seen, a VectorStore is an engine that can be used to perform similarity searches on documents.

A DocumentStore is an abstraction around a storage for documents that can be queried with more classical methods.

In many cases vector stores can also be document stores and vice versa, but this is not mandatory.

There are currently these DocumentStore classes:

- MemoryVectorStore

- FileSystemVectorStore

- DoctrineVectorStore

- MilvusVectorStore

Those implementations are both vector stores and document stores.

Let's see the current implementations of vector stores in LLPhant.

Doctrine VectorStore

One simple solution for web developers is to use a PostgreSQL database as a vectorStore with the pgvector extension. You can find all the information on the pgvector extension in its GitHub repository.

We suggest three simple ways to get a PostgreSQL database with the extension enabled:

In any case you will need to activate the extension:

Then you can create a table and store vectors. This SQL query will create the table corresponding to PlaceEntity in the test folder.

⚠️ If the embedding length is not 1536 you will need to specify it in the entity by overriding the $embedding property.

Typically, if you use the OpenAI3LargeEmbeddingGenerator class, you will need to set the length to 3072 in the entity.

Or if you use the MistralEmbeddingGenerator class, you will need to set the length to 1024 in the entity.

The PlaceEntity

The same DoctrineVectorStore now also supports MariaDB, starting from version 11.7-rc.

Here you can find the queries needed to initialize the DB.

Redis VectorStore

Prerequisites:

- Redis server running (see Redis quickstart)

- Predis composer package installed (see Predis)

Then create a new Redis Client with your server credentials, and pass it to the RedisVectorStore constructor:

You can now use the RedisVectorStore as any other VectorStore.

Elasticsearch VectorStore

Prerequisites:

- Elasticsearch server running (see Elasticsearch quickstart)

- Elasticsearch PHP client installed (see Elasticsearch PHP client)

Then create a new Elasticsearch Client with your server credentials, and pass it to the ElasticsearchVectorStore constructor:

You can now use the ElasticsearchVectorStore as any other VectorStore.

Milvus VectorStore

Prerequisites: Milvus server running (see Milvus docs)

Then create a new Milvus client (LLPhant\Embeddings\VectorStores\Milvus\MiluvsClient) with your server credentials, and pass it to the MilvusVectorStore constructor:

You can now use the MilvusVectorStore as any other VectorStore.

ChromaDB VectorStore

Prerequisites: Chroma server running (see Chroma docs). You can run it locally using this docker compose file.

Then create a new ChromaDB vector store (LLPhant\Embeddings\VectorStores\ChromaDB\ChromaDBVectorStore), for example:

You can now use this vector store as any other VectorStore.

AstraDB VectorStore

Prerequisites: an AstraDB account where you can create and delete databases (see AstraDB docs).

At the moment you cannot run this DB locally. You have to set the ASTRADB_ENDPOINT and ASTRADB_TOKEN environment variables with the data needed to connect to your instance.

Then create a new AstraDB vector store (LLPhant\Embeddings\VectorStores\AstraDB\AstraDBVectorStore), for example:

You can now use this vector store as any other VectorStore.

Typesense VectorStore

Prerequisites: Typesense server running (see Typesense). You can run it locally using this docker compose file.

Then create a new Typesense vector store (LLPhant\Embeddings\VectorStores\TypeSense\TypesenseVectorStore), for example:

FileSystem VectorStore

Please note that this vector store is intended just for small tests. In a production environment you should consider using a more effective engine. In a recent version (0.8.13) we modified the format of the vector store files. To use files in the old format you have to convert them to the new one with convertFromOldFileFormat:

Question Answering

A popular use case of LLMs is to create a chatbot that can answer questions over your private data.

You can build one with LLPhant using the QuestionAnswering class.

It leverages the vector store to perform a similarity search to get the most relevant information and return the answer generated by OpenAI.

Here is one example using the MemoryVectorStore:

Multi-Query query transformation

During the question answering process, the first step could transform the input query into something more useful for the chat engine.

One of these kinds of transformations could be the MultiQuery transformation.

This step gets the original query as input and then asks a query engine to reformulate it in order to have a set of queries to use for retrieving documents from the vector store.

Detect prompt injections

The QuestionAnswering class can use query transformations to detect prompt injections.

The first implementation we provide of such a query transformation uses an online service provided by Lakera. To configure this service you have to provide an API key, which can be stored in the LAKERA_API_KEY environment variable. You can also customize the Lakera endpoint to connect to through the LAKERA_ENDPOINT environment variable. Here is an example.

RetrievedDocumentsTransformer and Reranking

The list of documents retrieved from a vector store can be transformed before sending them to the Chat as context. One of these transformations can be a Reranking phase, which sorts documents based on their relevance to the question. The number of documents returned by the reranker can be less than or equal to the number returned by the vector store. Here is an example:

Token Usage

You can get the token usage of the OpenAI API by calling the getTotalTokens method of the QA object.

It returns the number of tokens used by the Chat class since its creation.

Small to Big Retrieval

The Small to Big Retrieval technique involves retrieving small, relevant chunks of text from a large corpus based on a query, and then expanding those chunks to provide a broader context for language model generation. Looking for small chunks of text first and then getting a bigger context is important for several reasons:

- Precision: By starting with small, focused chunks, the system can retrieve highly relevant information that is directly related to the query.

- Efficiency: Retrieving smaller units initially allows for faster processing and reduces the computational overhead associated with handling large amounts of text.

- Contextual richness: Expanding the retrieved chunks provides the language model with a broader understanding of the topic, enabling it to generate more comprehensive and accurate responses.

Here is an example:
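Independently of LLPhant's own classes, the expansion step can be illustrated with a minimal plain-PHP sketch. It assumes each small chunk carries the identifier of its parent document and a similarity score that has already been computed; expandToParents, the array shapes and the sample data are hypothetical.

```php
<?php
declare(strict_types=1);

/**
 * Keep the $k best-scoring small chunks, then return the full parent texts
 * they belong to (each parent once, in order of its best chunk).
 *
 * @param array<int, array{parent: string, text: string, score: float}> $scoredChunks
 * @param array<string, string> $parents parent id => full parent text
 * @return string[]
 */
function expandToParents(array $scoredChunks, array $parents, int $k): array
{
    usort($scoredChunks, static fn (array $a, array $b): int => $b['score'] <=> $a['score']);

    $context = [];
    foreach (array_slice($scoredChunks, 0, $k) as $chunk) {
        // Several top chunks may share a parent; include each parent only once.
        $context[$chunk['parent']] = $parents[$chunk['parent']];
    }

    return array_values($context);
}

$parents = [
    'doc-a' => 'Full text of document A, several paragraphs long.',
    'doc-b' => 'Full text of document B, several paragraphs long.',
];

// Scores are made up; in practice they come from the similarity search.
$scoredChunks = [
    ['parent' => 'doc-a', 'text' => 'small chunk A1', 'score' => 0.91],
    ['parent' => 'doc-b', 'text' => 'small chunk B1', 'score' => 0.40],
    ['parent' => 'doc-a', 'text' => 'small chunk A2', 'score' => 0.88],
];

$context = expandToParents($scoredChunks, $parents, 2);
print_r($context);
```

Searching over the small chunks keeps matching precise, while returning the parent text gives the model the surrounding context.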

Using tools with QuestionAnswering

If you need to use tools with QuestionAnswering, having their results considered in the process of generating the answer, you need to use the answerQuestionFromChat method:

Chat session (aka chat memory)

To automatically remember the chat session you can pass a ChatSession object to your QuestionAnswering. Here is an example:

ChatSession objects can also be serialized to JSON, so that you can put them into some kind of cache system between invocations.

AutoPHP

You can now make your AutoGPT clone in PHP using LLPhant. Have a look at the AutoPHP repository.

FAQ

Why use LLPhant and not the OpenAI PHP SDK directly?

The OpenAI PHP SDK is a great tool to interact with the OpenAI API. LLPhant allows you to perform complex tasks like storing embeddings and performing a similarity search. It also simplifies the usage of the OpenAI API by providing a much simpler API for everyday usage.

Contributors

Thanks to our contributors:

All versions of llphant with dependencies

charescape/php-functions Version ^1.5|^2.0

guzzlehttp/guzzle Version ^7.4.5

guzzlehttp/psr7 Version ^2.7

html2text/html2text Version ^4.3

laravel/framework Version ^11.9

openai-php/client Version ^v0.10.3

phpoffice/phppresentation Version ^1.1.0

phpoffice/phpword Version ^1.3.0

psr/http-message Version ^1.1|^2.0

spatie/pdf-to-text Version ^1.54

symfony/mime Version ^7.1

thiagoalessio/tesseract_ocr Version ^2.13

smalot/pdfparser Version ^2.11