Download the PHP package scrapy/scrapy without Composer

On this page you can find all versions of the php package scrapy/scrapy. It is possible to download/install these versions without Composer. Possible dependencies are resolved automatically.

Package scrapy

Short Description PHP web scraping made easy.

License MIT

Homepage https://github.com/aleksa-sukovic/scrapy

Information about the package scrapy

Scrapy

PHP web scraping made easy.

Please note: Documentation is always a work in progress, please excuse any errors.

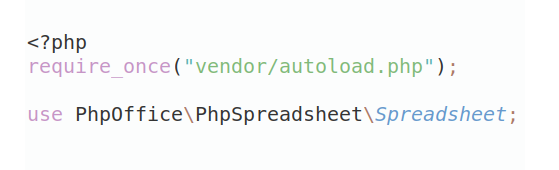

Installation

You can install the package via Composer by running `composer require scrapy/scrapy`.

Table of contents

- Basic Usage
- Parsers
  - Parser definition
  - Adding parser
  - Inline parsers
  - Passing additional parameters
- Crawly
  - Initialisation
  - Methods
    - Filter
    - First
    - Nth
    - Raw
    - Trim
    - Pluck
    - Count
    - Int
    - Float
    - String
    - Html
    - Inner HTML
    - Exists
    - Reset
    - Map
    - Node
- Readers
  - Using built in readers
  - Writing custom readers
- User Agents
  - Why use custom agents
  - Using built in agents
  - Writing custom user agents
- Build steps precedence
- Exception Handling
- Testing
- Changelog
- Credits
- License

Basic usage

Scrapy is essentially a reader that can modify the data it reads through a series of tasks. To simply read a URL, you can do the following.

Parsers

Just reading HTML from some source is not a lot of fun. Scrapy allows you to crawl HTML with a simple yet expressive API that relies on Symfony's DOM crawler.

You can think of parsers as actions meant to extract data valuable to you from HTML.

Parser definition

Parsers are meant to be self-contained scraping rules that let you extract data from an HTML string.

Adding parsers

Once you have your parsers defined, it's time to add them to Scrapy.

Inline parsers

You don't have to write a class for each parser; you can also define parsers inline. Let's see how that would look.

Passing additional parameters to parsers

Sometimes you want to pass some extra context to your parsers. With Scrapy, you can pass an associative array of parameters, which then becomes available to every parser.

The same principle applies whether you define parsers as separate classes or inline them as functions.

Crawly

You might have noticed that the first argument to a parser's process method is an instance of the Crawly class.

Crawly is an HTML crawling tool. It is based on Symfony's DOM Crawler.

Crawler initialisation

An instance of Crawly can be created from any string.

Crawling methods

Crawly provides a few helper methods that make it easier to get the data you want from HTML.

Filter

Filters elements with a CSS selector. Similar to what document.querySelectorAll('...') does in the browser, since the resulting selection can hold more than one node.

First

Narrow your selection by taking the first element from it.

Nth

Narrow your selection by taking the nth element from it. Note that indices are 0-based.
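The original code samples for Filter, First and Nth are not reproduced on this page. As a rough illustration of the same ideas, the sketch below uses PHP's built-in DOM extension (ext-dom, which the package lists as a dependency) rather than Crawly itself. The markup is made up, and an XPath query stands in for the CSS selector `li.item`; none of this is Crawly's own API.

```php
<?php
// Illustration with PHP's built-in ext-dom; this is NOT Crawly's API.
libxml_use_internal_errors(true); // keep parser warnings out of the output

$html = '<ul><li class="item">One</li><li class="item">Two</li><li class="item">Three</li></ul>';

$doc = new DOMDocument();
$doc->loadHTML($html);
$xpath = new DOMXPath($doc);

// "Filter": select every matching node (XPath stand-in for the CSS selector "li.item").
$items = $xpath->query('//li[@class="item"]');

// "First": take the first node of the selection.
$first = $items->item(0)->textContent;

// "Nth": take the node at a 0-based index, here the third one.
$third = $items->item(2)->textContent;

echo $items->length, PHP_EOL;
echo $first, PHP_EOL;
echo $third, PHP_EOL;
```

The 0-based indexing mirrors the note above: index 2 addresses the third list item.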

Raw

Get access to Symfony's DOM crawler.

Crawly does not aim to replace Symfony's DOM crawler, but rather to make its usage more pleasant. That's why not all methods are exposed directly through Crawly.

Using the raw method allows you to utilise the underlying Symfony crawler.

Trim

Trims the output string.

Pluck

Extracts attributes from the selection.
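To make the idea of plucking attributes concrete, here is a minimal sketch that collects the `href` attribute from every link in a selection. It again uses plain ext-dom instead of Crawly, and the sample markup and variable names are assumptions made for illustration.

```php
<?php
// Illustration with PHP's built-in ext-dom; this is NOT Crawly's API.
libxml_use_internal_errors(true);

$doc = new DOMDocument();
$doc->loadHTML('<p><a href="/home">Home</a> <a href="/about">About</a></p>');

// Select all anchor elements in the document.
$links = (new DOMXPath($doc))->query('//a');

// Collect one attribute value per selected element.
$hrefs = [];
foreach ($links as $link) {
    $hrefs[] = $link->getAttribute('href');
}

print_r($hrefs);
```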

Count

Returns the count of currently selected nodes.

Int

Returns the integer value of the current selection.

Float

Returns the float value of the current selection.
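Trim, Int and Float all amount to post-processing the text of a selected node. The sketch below shows that conversion with plain ext-dom and PHP casts. It only illustrates the concept and makes no claim about how Crawly implements these methods; the markup and ids are invented.

```php
<?php
// Illustration with PHP's built-in ext-dom; this is NOT Crawly's API.
libxml_use_internal_errors(true);

$doc = new DOMDocument();
$doc->loadHTML('<p><span id="qty"> 42 </span><span id="price"> 19.99 </span></p>');
$xpath = new DOMXPath($doc);

// Raw text content still carries the surrounding whitespace.
$qtyText = $xpath->query('//span[@id="qty"]')->item(0)->textContent;
$priceText = $xpath->query('//span[@id="price"]')->item(0)->textContent;

// Trim first, then cast to the numeric type you need.
$qty = (int) trim($qtyText);
$price = (float) trim($priceText);

var_dump($qty, $price);
```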

String

Returns the current selection's inner content as a string.

Html

Returns the HTML string representation of the current selection, including the parent element.

Inner HTML

Returns the HTML string representation of the current selection, excluding the parent element.
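The difference between the two is easiest to see side by side. The following sketch uses plain ext-dom (not Crawly) to serialize a node once with its own tag and once as the concatenation of its children. The `box` id and the markup are made up, and the serializer may insert line breaks between block elements, so no exact output is claimed.

```php
<?php
// Illustration with PHP's built-in ext-dom; this is NOT Crawly's API.
libxml_use_internal_errors(true);

$doc = new DOMDocument();
$doc->loadHTML('<div id="box"><p>Hello</p><p>World</p></div>');
$box = (new DOMXPath($doc))->query('//div[@id="box"]')->item(0);

// "Html": serialize the node itself, so the <div> wrapper is included.
$outer = $doc->saveHTML($box);

// "Inner HTML": serialize only the children, so the <div> wrapper is left out.
$inner = '';
foreach ($box->childNodes as $child) {
    $inner .= $doc->saveHTML($child);
}

echo $outer, PHP_EOL;
echo $inner, PHP_EOL;
```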

Exists

Checks whether the given selection exists.

You can either get a boolean response or have an exception raised.

Reset

Resets the crawler back to its original HTML.

Map

This method creates a new array populated with the results of calling a provided function on every node in a selection.

For each node, a callback function is called with a Crawly instance created from that node. The callback also receives a second argument, which is the 0-based index of the node.
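The callback contract described above (node first, 0-based index second, results collected into a new array) can be sketched as follows. The `mapNodes` helper is hypothetical and built on plain ext-dom; in Crawly the callback would receive a Crawly instance rather than a raw DOM node.

```php
<?php
// Illustration with PHP's built-in ext-dom; mapNodes() is a hypothetical
// helper and NOT part of Crawly.
libxml_use_internal_errors(true);

function mapNodes(DOMNodeList $nodes, callable $callback): array
{
    $results = [];
    for ($i = 0; $i < $nodes->length; $i++) {
        // The callback gets the node and its 0-based index.
        $results[] = $callback($nodes->item($i), $i);
    }
    return $results;
}

$doc = new DOMDocument();
$doc->loadHTML('<ul><li>Apple</li><li>Banana</li><li>Cherry</li></ul>');
$nodes = (new DOMXPath($doc))->query('//li');

$labels = mapNodes($nodes, function (DOMNode $node, int $index): string {
    return $index . ': ' . $node->textContent;
});

print_r($labels);
```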

Node

Returns the first DOMNode of the selection.

Readers

Readers are data source classes used by Scrapy to fetch the HTML content.

Scrapy comes with some readers predefined, and you can also write your own if you need to.

Using built in readers

Scrapy comes with two built-in readers: UrlReader and FileReader. Let's see how you may use them.

As you can see, the built-in readers allow you to use Scrapy by reading either from a URL or from a specific file.

Writing custom readers

You are not limited to the built-in readers. Writing your own is a piece of cake.

And then use it during the build process.

User agents

A user agent is a computer program representing a person, in this case a Scrapy instance. Scrapy provides several built in user agents for simulating different crawlers.

Why use custom user agents

User agents make sense only in the context of readers that fetch their data over HTTP. More precisely, they matter when you want to read a web page that creates its content dynamically using JavaScript.

By default, Scrapy cannot execute JavaScript. This is a problem all web crawlers face. There are numerous techniques for overcoming it, usually involving external services like Prerender, which redirect crawling bots to cached HTML pages.

Several user agents are provided to allow Scrapy to present itself as one of the common crawlers. Please note that if a web page implements more advanced crawler checks (for example, an IP check), then the provided agents will fail, since they only modify the HTTP request headers.

If you want to find out more, there is a great article on pre-rendering over at Netlify.
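Since the built-in agents are described as only modifying HTTP request headers, the underlying mechanism can be sketched without Scrapy at all. The example below sets a `User-Agent` header through a PHP stream context. This is an assumption-laden illustration: Scrapy lists Guzzle as its HTTP dependency, and its agent classes are not shown on this page, so nothing here reflects its actual implementation. The header value is a commonly published crawler string used purely as sample data.

```php
<?php
// Illustration with PHP streams; this is NOT how Scrapy configures agents.

// A commonly published crawler User-Agent string, used here as sample data.
$userAgent = 'Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)';

// Build an HTTP stream context that sends the custom User-Agent header.
$context = stream_context_create([
    'http' => [
        'method' => 'GET',
        'user_agent' => $userAgent,
        'timeout' => 10,
    ],
]);

// Inspect the configured options; no request is sent at this point.
$options = stream_context_get_options($context);
echo $options['http']['user_agent'], PHP_EOL;

// To actually fetch a page with this header, pass the context along:
// $html = file_get_contents('https://example.com', false, $context);
```

Only the request header changes, so a site that checks the caller's IP address would still see through it, as noted above.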

Using built in agents

Scrapy comes with a few built-in agents you can use.

Writing custom agents

Just like with readers, you can write your own custom user agents.

And then use it during the build process.

Precedence of parameters

One thing to note is the precedence of different parameters you may set during the build process.

Setting the URL is the same as setting the reader to a UrlReader with that URL. On the other hand, an explicitly set reader takes precedence over an explicitly set URL and/or user agent.

Exception handling

In general, Scrapy tries to handle all possible exceptions by wrapping them in its base exception class, ScrapeException.

What this means is that you can organize your app around a single exception for general error handling.
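The wrapping pattern itself can be sketched in a few lines. `DemoScrapeException` and `runGuarded` below are hypothetical stand-ins written for this example; they are not Scrapy's ScrapeException or any function from the library, whose namespace and constructor are not shown on this page.

```php
<?php
// Hypothetical stand-in for a library's base exception; NOT Scrapy's own class.
class DemoScrapeException extends Exception
{
}

// Runs a step and converts any failure into the single base exception type.
function runGuarded(callable $step)
{
    try {
        return $step();
    } catch (DemoScrapeException $e) {
        throw $e; // already the base type, pass it through unchanged
    } catch (Throwable $e) {
        // Keep the original error reachable via getPrevious().
        throw new DemoScrapeException('Scraping failed: ' . $e->getMessage(), 0, $e);
    }
}

$message = null;
$cause = null;

try {
    runGuarded(function () {
        throw new InvalidArgumentException('selector matched nothing');
    });
} catch (DemoScrapeException $e) {
    // One catch block is enough for general error handling.
    $message = $e->getMessage();
    $cause = get_class($e->getPrevious());
}

echo $message, PHP_EOL;
echo $cause, PHP_EOL;
```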

A more granular system is planned for a future release, which would allow you to react to specific parser exceptions.

Testing

To run the entire suite of unit tests, you can do:

Changelog

Please see CHANGELOG for more information on what has changed recently.

Credits

License

The MIT License (MIT). Please see License File for more information.

All versions of scrapy with dependencies

guzzlehttp/guzzle Version ~6.0

symfony/css-selector Version ^4.4

symfony/dom-crawler Version ^4.4

ext-dom Version *