Download the PHP package level23/druid-client without Composer


Package druid-client

Short Description Druid php client for executing queries and more

License Apache-2.0

Information about the package druid-client

Druid-Client

The goal of this project is to make it easy to select data from druid.

This project gives you an easy query builder to create the complex druid queries.

It also gives you a way to manage dataSources (tables) in druid and import new data from files.

Requirements

This package only requires Guzzle as a dependency. The PHP and Guzzle version requirements are listed below.

| Druid Client Version | PHP Requirements | Guzzle Requirements | Druid Requirements |
|---|---|---|---|
| 4.* (current) | PHP 8.2 or higher | Version 7.0 | >= 28.0.0 |
| 3.* | PHP 8.2 or higher | Version 7.0 | |
| 2.* | PHP 7.4, 8.0, 8.1 and 8.2 | Version 6.2 or 7.* | |
| 1.* | PHP 7.2, 7.4, 8.0, 8.1 and 8.2 | Version 6.2 or 7.* | |

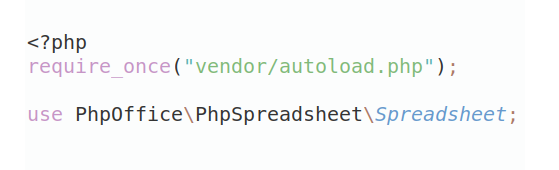

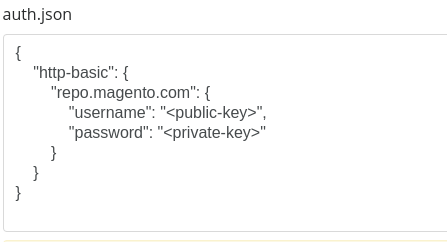

Installation

To install this package with composer, run: composer require level23/druid-client

You can also download it as a ZIP file and include it in your project, as long as you have Guzzle also in your project.

ChangeLog and Upgrading

See CHANGELOG for changes in the different versions and how to upgrade to the latest version.

Laravel/Lumen support.

This package is Laravel/Lumen ready. It can be used in a Laravel/Lumen project, but it's not required.

Laravel

For Laravel the package will be auto discovered.

Lumen

If you are using a Lumen project, just include the service provider in bootstrap/app.php:

Laravel/Lumen Configuration:

You should also define the correct endpoint URLs in the .env of your Laravel/Lumen project:

If you are using a Druid Router process, you can also just set the router url, which then will be used for the broker, overlord and the coordinator:

Todos

- Support for building metricSpec and DimensionSpec in CompactTaskBuilder

- Implement hadoop based batch ingestion (indexing)

- Implement Avro Stream and Avro OCF input formats.

Examples

There are several examples which are written for the single-server tutorial of druid. See this page for more information.

Table of Contents

- DruidClient

- DruidClient::auth()

- DruidClient::query()

- DruidClient::lookup()

- DruidClient::cancelQuery()

- DruidClient::compact()

- DruidClient::reindex()

- DruidClient::pollTaskStatus()

- DruidClient::taskStatus()

- DruidClient::metadata()

- QueryBuilder: Generic Query Methods

- interval()

- limit()

- orderBy()

- orderByDirection()

- pagingIdentifier()

- subtotals()

- metrics()

- dimensions()

- toArray()

- toJson()

- QueryBuilder: Data Sources

- from()

- join()

- leftJoin()

- innerJoin()

- joinLookup()

- union()

- QueryBuilder: Dimension Selections

- select()

- lookup()

- inlineLookup()

- multiValueListSelect()

- multiValueRegexSelect()

- multiValuePrefixSelect()

- QueryBuilder: Metric Aggregations

- count()

- sum()

- min()

- max()

- first()

- last()

- any()

- javascript()

- hyperUnique()

- cardinality()

- distinctCount()

- doublesSketch()

- QueryBuilder: Filters

- where()

- orWhere()

- whereNot()

- orWhereNot()

- whereNull()

- orWhereNull()

- whereIn()

- orWhereIn()

- whereArrayContains()

- orWhereArrayContains()

- whereBetween()

- orWhereBetween()

- whereColumn()

- orWhereColumn()

- whereInterval()

- orWhereInterval()

- whereFlags()

- orWhereFlags()

- whereExpression()

- orWhereExpression()

- whereSpatialRectangular()

- whereSpatialRadius()

- whereSpatialPolygon()

- orWhereSpatialRectangular()

- orWhereSpatialRadius()

- orWhereSpatialPolygon()

- QueryBuilder: Having Filters

- having()

- orHaving()

- QueryBuilder: Virtual Columns

- virtualColumn()

- selectVirtual()

- QueryBuilder: Post Aggregations

- fieldAccess()

- constant()

- expression

- divide()

- multiply()

- subtract()

- add()

- quotient()

- longGreatest() and doubleGreatest()

- longLeast() and doubleLeast()

- postJavascript()

- hyperUniqueCardinality()

- quantile()

- quantiles()

- histogram()

- rank()

- cdf()

- sketchSummary()

- QueryBuilder: Search Filters

- searchContains()

- searchFragment()

- searchRegex()

- QueryBuilder: Execute The Query

- execute()

- groupBy()

- topN()

- selectQuery()

- scan()

- timeseries()

- search()

- LookupBuilder: Generic Methods

- all()

- names()

- introspect()

- keys()

- values()

- tiers()

- store()

- delete()

- LookupBuilder: Building Lookups

- uri()

- uriPrefix()

- kafka()

- jdbc()

- map()

- maxHeapPercentage()

- pollPeriod()

- injective()

- firstCacheTimeout()

- LookupBuilder: Lookup Parse Specifications

- tsv()

- csv()

- json()

- customJson()

- Metadata

- intervals

- interval

- structure

- timeBoundary

- dataSources

- rowCount

- Reindex/compact data/kill

- compact()

- reindex()

- kill()

- Importing data using a batch index job

- Input Sources

- AzureInputSource

- GoogleCloudInputSource

- S3InputSource

- HdfsInputSource

- HttpInputSource

- Input Formats

- csvFormat()

- tsvFormat()

- jsonFormat()

- orcFormat()

- parquetFormat()

- protobufFormat()

Documentation

Here is an example of how you can use this package.

Please see the inline comment for more information / feedback.

Example:
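A minimal sketch of a complete query, using only methods described in this document. The function name addedPerChannel, the "wikipedia" dataSource and the column names are illustrative assumptions, as is the Level23\Druid namespace used in the import.

```php
<?php
use Level23\Druid\DruidClient;

/**
 * Sketch: sum the "added" metric per channel over the last 24 hours.
 * The dataSource and column names are placeholders for your own data.
 */
function addedPerChannel(DruidClient $client)
{
    return $client->query('wikipedia', 'all')
        // Druid always needs a time range to query.
        ->interval('now - 24 hours', 'now')
        // The dimension to group by.
        ->select('channel')
        // The metric aggregation, available as "totalAdded" in the result.
        ->sum('added', 'totalAdded')
        // Highest totals first, at most 10 rows.
        ->orderBy('totalAdded', 'desc')
        ->limit(10)
        // Let the builder detect the query type and run it.
        ->execute();
}

// Usage (requires a reachable Druid instance):
// $client = new DruidClient(['router_url' => 'http://my.url']);
// $response = addedPerChannel($client);
```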

DruidClient

The DruidClient class is the class where it all begins. You initiate an instance of the druid client, which holds the configuration of your instance.

The DruidClient constructor has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| array | Required | $config | ['router_url' => 'http://my.url'] | The configuration which is used for this DruidClient. This configuration contains the endpoints where we should send druid queries to. |
| GuzzleHttp\Client | Optional | $client | See example below | If given, we will use this Guzzle Client for sending queries to your druid instance. This allows you to control the connection. |

For a complete list of configuration settings, take a look at the default values which are defined in the $config property in the DruidClient class.

This class supports some newer functions of Druid. To make sure your server supports these functions, it is recommended to supply the version config setting.

By default, we will use a guzzle client for handling the connection between your application and the druid server. If you want to change this, for example because you want to use a proxy, you can do this with a custom guzzle client.

Example of using a custom guzzle client:
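A sketch of passing a custom guzzle client as the second constructor argument. The helper name clientBehindProxy and both URLs are hypothetical; proxy, timeout and connect_timeout are standard Guzzle request options, set here as client defaults.

```php
<?php
use GuzzleHttp\Client as GuzzleClient;
use Level23\Druid\DruidClient;

/**
 * Sketch: build a DruidClient whose requests go through a proxy.
 */
function clientBehindProxy(string $routerUrl, string $proxyUrl): DruidClient
{
    $guzzle = new GuzzleClient([
        'proxy'           => $proxyUrl, // e.g. 'tcp://localhost:8125'
        'timeout'         => 30,        // seconds for the whole request
        'connect_timeout' => 10,        // seconds to establish the connection
    ]);

    // The second argument replaces the default guzzle client.
    return new DruidClient(['router_url' => $routerUrl], $guzzle);
}

// Usage:
// $client = clientBehindProxy('http://my.url', 'tcp://localhost:8125');
```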

The DruidClient class gives you various methods. The most commonly used is the query() method, which allows you to build and execute a query.

DruidClient::auth()

If you have configured your Druid cluster with authentication, you can supply your username/password with this method. The username/password will be sent in the requests as HTTP Basic Auth parameters.

See also: https://druid.apache.org/docs/latest/operations/auth/

The auth() method has 2 parameters:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $username | "foo" | The username used for authentication. |
| string | Required | $password | "bar" | The password used for authentication. |

You can also overwrite the client and use your own mechanism. See DruidClient.

DruidClient::query()

The query() method gives you a QueryBuilder instance, which allows you to build a query and then execute it.

Example:
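A short sketch of both query() parameters. With the "day" granularity the result is grouped per day instead of being returned as one set. The function name dailyRowCount and the "wikipedia" dataSource are illustrative assumptions.

```php
<?php
use Level23\Druid\DruidClient;

/**
 * Sketch: count the rows per day over the last week.
 */
function dailyRowCount(DruidClient $client)
{
    // First argument: the dataSource. Second argument: the granularity.
    return $client->query('wikipedia', 'day')
        ->interval('now - 7 days', 'now')
        // Number of Druid rows per day, available as "rows".
        ->count('rows')
        // Run the query as a timeseries query.
        ->timeseries();
}
```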

The query method has 2 parameters:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Optional | $dataSource | "wikipedia" | The name of the dataSource (table) which you want to query. |
| string | Optional | $granularity | "all" | The granularity which you want to use for this query. You can think of this like an extra "group by" per time window. The results will be grouped by this time window. By default we will use "all", which will return the resultSet in 1 set. Valid values are: all, none, second, minute, fifteen_minute, thirty_minute, hour, day, week, month, quarter and year |

The QueryBuilder allows you to select dimensions, aggregate metric data, apply filters and having filters, etc.

When you do not specify the dataSource, you need to specify it later on your query builder. There are various methods to do this. See QueryBuilder: Data Sources

See the following chapters for more information about the query builder.

DruidClient::lookup()

The lookup() method gives you a LookupBuilder instance, which allows you to create/update, list or delete lookups.

A lookup is a key-value list which is kept in-memory in druid. During queries, you can use these lists to transform certain data. For example, change a user_id to a human-readable name.

See also LookupBuilder: Generic Methods.

Example:

DruidClient::cancelQuery()

The cancelQuery() method gives you the ability to cancel a query. To cancel a query, you must know its unique identifier.

When you execute a query, you can specify the unique identifier yourself in the query context.

Example:

You can now cancel this query within another process. If you for example store the running queries somewhere, you can "stop" the running queries by executing this:
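Both steps can be sketched as follows. The function names are hypothetical, and passing the context array to execute() is an assumption based on the $context argument documented for toArray(); queryId is the Druid query context key for the identifier.

```php
<?php
use Level23\Druid\DruidClient;

/**
 * Sketch: run a query under a known identifier.
 */
function runWithQueryId(DruidClient $client, string $queryId)
{
    return $client->query('wikipedia', 'all')
        ->interval('now - 24 hours', 'now')
        ->select('channel')
        ->count('rows')
        // The identifier is supplied in the query context.
        ->execute(['queryId' => $queryId]);
}

/**
 * Sketch: cancel that query from another process.
 */
function stopQuery(DruidClient $client, string $queryId): void
{
    // Returns nothing on success and throws an exception on failure.
    $client->cancelQuery($queryId);
}
```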

The cancelQuery() method has 1 parameter:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $identifier | "myqueryid" | The unique query identifier which was given in the query context. |

If the cancellation fails, the method will throw an exception. Otherwise, it will not return any result.

See also: https://druid.apache.org/docs/latest/querying/querying.html#query-cancellation

DruidClient::compact()

The compact() method returns a CompactTaskBuilder object which allows you to build a compact task.

For more information, see compact().

DruidClient::reindex()

The reindex() method returns an IndexTaskBuilder object which allows you to build a re-index task.

For more information, see reindex().

DruidClient::taskStatus()

The taskStatus() method allows you to fetch the status of a task identifier.

For more information and an example, see compact().

DruidClient::pollTaskStatus()

The pollTaskStatus() method allows you to wait until the status of a task is other than RUNNING.

For more information and an example, see compact().

DruidClient::metadata()

The metadata() method returns a MetadataBuilder object, which allows you to retrieve metadata from your druid instance. See the Metadata chapter for more information.

QueryBuilder: Generic Query Methods

Here we will describe some methods which are generic and can be used by (almost) all queries.

interval()

Because Druid is a TimeSeries database, you always need to specify between which times you want to query. With this method you can do just that.

The interval method is very flexible and supports various argument formats.

All these examples are valid:
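A sketch of the argument formats listed in the parameter table. The function name intervalFormats and the concrete dates are illustrative. Because every call adds another interval to the query, a real query would use only the calls it needs.

```php
<?php
use Level23\Druid\Queries\QueryBuilder;

/**
 * Sketch of the accepted interval() argument formats.
 */
function intervalFormats(QueryBuilder $builder): void
{
    // Two date strings.
    $builder->interval('2019-12-23', '2019-12-24');

    // Relative date strings.
    $builder->interval('now - 24 hours', 'now');

    // One string containing a slash: the stop date can be left out.
    $builder->interval('2015-09-12T00:00:00.000Z/2015-09-13T00:00:00.000Z');

    // DateTime objects.
    $builder->interval(new DateTime('yesterday'), new DateTime('now'));

    // Unix timestamps as integers.
    $builder->interval(1570643085, 1570729485);
}
```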

The start date should be before the end date. If not, an InvalidArgumentException will be thrown.

You can call this method multiple times to select from various data sets.

The interval() method has the following parameters:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string/int/DateTime | Required | $start | "now - 24 hours" | The start date from where we will query. See the examples above for the allowed formats. |
| string/int/DateTime/null | Optional | $stop | "now" | The stop date up to which we will query. See the examples above for the allowed formats. When a string containing a slash is given as start date, the stop date can be left out. |

limit()

The limit() method allows you to limit the result set of the query.

The limit can be used for all query types. However, it is mandatory for the TopN Query and the Select Query.

The $offset parameter only applies to GroupBy and Scan queries and is only supported since druid version 0.20.0. It skips this many rows when returning results. Skipped rows will still need to be generated internally and then discarded, meaning that raising offsets to high values can cause queries to use additional resources.

Together, $limit and $offset can be used to implement pagination. However, note that if the underlying datasource is modified in between page fetches in ways that affect overall query results, then the different pages will not necessarily align with each other.

Example:
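A sketch of pagination with $limit and $offset. The helpers pageOffset and applyPage are hypothetical and not part of the package; they only turn a 1-based page number into the offset that is passed to limit().

```php
<?php
use Level23\Druid\Queries\QueryBuilder;

/**
 * Hypothetical helper: offset of the first row of a 1-based page.
 */
function pageOffset(int $page, int $perPage): int
{
    // Page 1 starts at offset 0, page 2 at $perPage, and so on.
    return max(0, $page - 1) * $perPage;
}

/**
 * Sketch: restrict a GroupBy or Scan query to one page of results.
 */
function applyPage(QueryBuilder $builder, int $page, int $perPage): QueryBuilder
{
    return $builder->limit($perPage, pageOffset($page, $perPage));
}

// Usage: the third page of 50 rows is limit(50, 100).
// applyPage($builder, 3, 50);
```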

The limit() method has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| int | Required | $limit | 50 | Limit the result to this given number of records. |
| int | Optional | $offset | 10 | Skip this many rows when returning results. |

orderBy()

The orderBy() method allows you to order the result in a given way.

This method only applies to GroupBy and TopN Queries. For TimeSeries, Select and Scan Queries, use orderByDirection().

Example:
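A sketch of ordering a GroupBy style query. The function name and the column names are illustrative assumptions. The direction is passed as a string here, which the parameter table allows next to the OrderByDirection constants.

```php
<?php
use Level23\Druid\Queries\QueryBuilder;

/**
 * Sketch: order by an aggregated metric first, then by a dimension.
 */
function orderByTotalAdded(QueryBuilder $builder): QueryBuilder
{
    return $builder
        ->select('channel')
        ->sum('added', 'totalAdded')
        // Highest totals first.
        ->orderBy('totalAdded', 'desc')
        // A second call adds another order-by (GroupBy queries only).
        ->orderBy('channel', 'asc')
        // Supply a limit explicitly instead of relying on the default.
        ->limit(25);
}
```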

The orderBy() method has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $dimensionOrMetric | "channel" | The dimension or metric where you want to order by |
| string | Optional | $direction | OrderByDirection::DESC | The direction of your order. You can use an OrderByDirection constant, or a string like "asc" or "desc". Default "asc" |
| string | Optional | $sortingOrder | SortingOrder::STRLEN | This defines how the sorting is executed. |

For more information about sorting orders, see this page: https://druid.apache.org/docs/latest/querying/sorting-orders.html

Please note: this method differs per query type. Please read below how this method works per query type.

GroupBy Query

You can call this method multiple times, adding an order-by to the query.

The GroupBy Query only allows ordering the result if a limit is given. If you do not supply a limit, we will use a default limit of 999999.

TopN Query

For this query type it is mandatory to call this method. You should call this method with the dimension or metric where you want to order your result by.

orderByDirection()

The orderByDirection() method allows you to specify the direction of the order by. This method only applies to the TimeSeries, Select and Scan Queries. Use orderBy() for GroupBy and TopN Queries.

Example:

The orderByDirection() method has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $direction | OrderByDirection::DESC | The direction of your order. You can use an OrderByDirection constant, or a string like "asc" or "desc". |

pagingIdentifier()

The pagingIdentifier() method allows you to paginate the result set. This only works on SELECT queries.

When you execute a select query, you will receive a paging identifier. To request the next "page", use this paging identifier in your next request.

Example:

A paging identifier is an array and looks something like this:

The pagingIdentifier() method has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| array | Required | $pagingIdentifier | See above. | The paging identifier from your previous request. |

subtotals()

The subtotals() method allows you to retrieve your aggregations over various dimensions in your query. This is quite similar to the WITH ROLLUP mysql logic.

NOTE: This method only applies to groupBy queries!

Example:

Example response (Note: result is converted to a table for better visibility):

As you can see, the first three records are our result per 'hour' and 'namespace'.

Then, two records are just per 'hour'.

Finally, the last record is the 'total'.

The subtotals() method has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| array | Required | $subtotals | [ ['country', 'city'], ['country'], [] ] | An array which contains arrays with the dimensions for which you want to receive your totals. See example above. |

metrics()

With the metrics() method you can specify which metrics you want to select when you are executing a selectQuery().

NOTE: This only applies to the select query type!

Example:

The metrics() method has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| array | Required | $metrics | ['added', 'deleted'] | Array of metrics which you want to select in your select query. |

dimensions()

With the dimensions() method you can specify which dimensions should be used for a Search Query.

NOTE: This only applies to the search query type! See also the Search query. This method should not be confused with selecting dimensions for your other query types. See Dimension Selections for more information about selecting dimensions for your query.

The dimensions() method has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| array | Required | $dimensions | ['name', 'address'] | Array of dimensions where you want to search in. |

toArray()

The toArray() method will try to build the query. We will try to auto-detect the best query type. After that, we will build the query and return the query as an array.

Example:
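A sketch of inspecting a query without executing it. The function name previewQuery is hypothetical. The context array is optional, and priority is a standard Druid query context key.

```php
<?php
use Level23\Druid\Queries\QueryBuilder;

/**
 * Sketch: return the native Druid query which the builder would send.
 */
function previewQuery(QueryBuilder $builder): array
{
    // Nothing is sent to Druid here; the query is only built.
    return $builder->toArray(['priority' => 75]);
}

// Usage, for example while debugging:
// var_export(previewQuery($builder));
```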

The toArray() method has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| array/QueryContext | Optional | $context | ['priority' => 75] | Query context parameters. |

toJson()

The toJson() method will try to build the query. We will try to auto-detect the best query type. After that, we will build the query and return the query as a JSON string.

Example:

The toJson() method has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| array/QueryContext | Optional | $context | ['priority' => 75] | Query context parameters. |

QueryBuilder: Data Sources

By default, you will specify the dataSource where you want to select your data from with the query. For example:

In this chapter we will explain how to change it dynamically, or, for example, join other dataSources.

from()

You can use this method to override / change the currently used dataSource (if any).

You can supply a string, which will be interpreted as a druid dataSource table.

You can also specify an object which implements the DataSourceInterface.

This method has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string/DataSourceInterface | Required | $dataSource | hits | The dataSource which you want to use to retrieve druid data from. |

fromInline()

Inline datasources allow you to query a small amount of data that is embedded in the query itself. They are useful when you want to write a query on a small amount of data without loading it first. They are also useful as inputs into a join.

Each row is an array that must be exactly as long as the list of columnNames. The first element in each row corresponds to the first column in columnNames, and so on.

See also: https://druid.apache.org/docs/latest/querying/datasource.html#inline
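A sketch of querying inline data. The helper rowsMatchColumns is hypothetical and not part of the package; it only checks the rule that every row must be exactly as long as the list of column names before the data is handed to fromInline().

```php
<?php
use Level23\Druid\DruidClient;

/**
 * Hypothetical helper: true when every row has one value per column.
 */
function rowsMatchColumns(array $columnNames, array $rows): bool
{
    foreach ($rows as $row) {
        if (!is_array($row) || count($row) !== count($columnNames)) {
            return false;
        }
    }

    return true;
}

/**
 * Sketch: start a query on inline data instead of a druid table.
 */
function inlineCities(DruidClient $client)
{
    $columnNames = ['country', 'city'];
    $rows = [
        ['United States', 'San Francisco'],
        ['Canada', 'Calgary'],
    ];

    if (!rowsMatchColumns($columnNames, $rows)) {
        throw new InvalidArgumentException('Each row needs one value per column.');
    }

    // query() without a dataSource; the inline data is set afterwards.
    return $client->query()->fromInline($columnNames, $rows);
}
```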

This method has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| array | Required | $columnNames | ["country", "city"] | The column names of the data you will supply. |
| array | Required | $rows | [ ["United States", "San Francisco"], ["Canada", "Calgary"]] | The rows of data which will be used. Each row has to have as many items as the number of names in $columnNames. |

join()

With this method you can join another dataSource. This is available since druid version 0.23.0.

Please be aware that joins are executed as a subquery in Druid, which may have a substantial effect on the performance.

See:

- https://druid.apache.org/docs/latest/querying/datasource.html#join

- https://druid.apache.org/docs/latest/querying/query-execution.html#join

You can also specify a sub-query as a join. For example:

You can also specify another DataSource as value. For example, you can create a new JoinDataSource object and pass that as value. However, there are easy methods created for this (for example joinLookup()), so you probably do not have to use this. It might be useful when you want to join with inline data (you can use the InlineDataSource).

This method has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string/DataSourceInterface/Closure | Required | $dataSourceOrClosure | countries | The name of the dataSource which you want to join. You can also specify a Closure. Please see above. |
| string | Required | $as | alias | The alias name as this dataSource is accessible in your query. |
| Closure | Required | $condition | alias.a = main.a | Here you can specify the condition of the join |
| string | Optional | $joinType | INNER | The join type. This can either be INNER or LEFT. |

leftJoin()

This works the same as the join() method, but the joinType will always be LEFT.

innerJoin()

This works the same as the join() method, but the joinType will always be INNER.

joinLookup()

With this method you can join a lookup as a dataSource.

Lookup datasources are key-value oriented and always have exactly two columns: k (the key) and v (the value), and both are always strings.

Example:

See: https://druid.apache.org/docs/latest/querying/datasource.html#lookup

This method has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $lookupName | departments | The name of the lookup which you want to join. |
| string | Required | $as | alias | The alias name as this dataSource is accessible in your query. |
| Closure | Required | $condition | alias.a = main.a | Here you can specify the condition of the join |
| string | Optional | $joinType | INNER | The join type. This can either be INNER or LEFT. |

union()

Unions allow you to treat two or more tables as a single datasource. In SQL, this is done with the UNION ALL operator applied directly to tables, called a "table-level union". In native queries, this is done with a "union" datasource.

With the native union datasource, the tables being unioned do not need to have identical schemas. If they do not fully match up, then columns that exist in one table but not another will be treated as if they contained all null values in the tables where they do not exist.

In either case, features like expressions, column aliasing, JOIN, GROUP BY, ORDER BY, and so on cannot be used with table unions.

See: https://druid.apache.org/docs/latest/querying/datasource.html#union

Example:

This method has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string or array | Required | $dataSources | ["hits_eu", "hits_us"] | The name of the dataSources which you want to query. NOTE! The current dataSource is automatically added! |
| bool | Optional | $append | true | This controls if the currently used dataSource should be added to this list or not. This only works if the current dataSource is a table dataSource. |

QueryBuilder: Dimension Selections

Dimensions are fields which you normally filter on or group data by. Typical examples are: Country, Name, City, etc.

To select a dimension, you can use one of the methods below:

select()

This method has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string or array | Required | $dimension | country_iso | The dimension which you want to select |
| string | Optional | $as | country | The name where the result will be available by in the result set. |
| string | Optional | $outputType | string | The output type of the data. If left unspecified, we will use string. |

This method allows you to select a dimension in various ways.

You can use:

Simple dimension selection:

Dimension selection with an alternative output name:

Select various dimensions at once:

Select various dimensions with alternative output names at once:

Change the output type of the dimension:
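The five variants listed above can be sketched as follows. The function name selectVariants and the dimension names are illustrative. The array form with dimension => output name pairs is an assumption based on the "string or array" type of $dimension. Each call adds dimensions to the query, so a real query would use only the calls it needs.

```php
<?php
use Level23\Druid\Queries\QueryBuilder;

/**
 * Sketch of the select() variants.
 */
function selectVariants(QueryBuilder $builder): void
{
    // Simple dimension selection.
    $builder->select('country_iso');

    // Alternative output name: "country_iso" is returned as "country".
    $builder->select('country_iso', 'country');

    // Various dimensions at once.
    $builder->select(['browser', 'age', 'gender']);

    // Various dimensions with alternative output names
    // (assumed form: dimension => output name).
    $builder->select(['browser' => 'TheBrowser', 'age' => 'Age']);

    // Change the output type of the dimension, passed as a string here.
    $builder->select('age', 'age', 'long');
}
```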

lookup()

This method allows you to look up a dimension using a registered lookup function. See more about registered lookup functions on these pages:

- https://druid.apache.org/docs/latest/querying/lookups.html

- https://druid.apache.org/docs/latest/development/extensions-core/lookups-cached-global.html

Lookups are a handy way to transform an ID value into a user readable name, like transforming a user_id into the username, without having to store the username in your dataset.

This method has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $lookupFunction | username_by_id | The name of the lookup function which you want to use for this dimension. |
| string | Required | $dimension | user_id | The dimension which you want to transform. |
| string | Optional | $as | username | The name where the result will be available by in the result set. |
| bool/string | Optional | $keepMissingValue | Unknown | What to do when the user_id dimension could not be found in the lookup. Use false to remove the value from the result, or use true to keep the original dimension value (the user_id). When a string is given, we will replace the value with the given string. |

Example:
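A sketch using the example values from the parameter table. The function name withUsername is hypothetical, and the lookup username_by_id is assumed to be registered in your druid instance.

```php
<?php
use Level23\Druid\Queries\QueryBuilder;

/**
 * Sketch: select the "user_id" dimension as a readable "username".
 */
function withUsername(QueryBuilder $builder): QueryBuilder
{
    return $builder->lookup(
        'username_by_id', // the registered lookup function
        'user_id',        // the dimension to transform
        'username',       // the output name in the result set
        'Unknown'         // replacement when the user_id is not in the lookup
    );
}
```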

inlineLookup()

This method allows you to look up a dimension using a predefined list.

Lookups are a handy way to transform an ID value into a user readable name, like transforming a category_id into the category, without having to store the category in your dataset.

This method has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| array | Required | $map | [1 => "IT", 2 => "Finance"] | The list with key => value items, where the dimension's value will be used to find the value in the list. |
| string | Required | $dimension | user_id | The dimension which you want to transform. |
| string | Optional | $as | username | The name where the result will be available by in the result set. |
| bool/string | Optional | $keepMissingValue | Unknown | What to do when the dimension value could not be found in the list. Use false to remove the value from the result, or use true to keep the original dimension value. When a string is given, we will replace the value with the given string. |
| bool | Optional | $isOneToOne | true | Set to true if the key/value items are unique in the given map. |

Example:
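A sketch of an inline lookup. The helper isOneToOne is hypothetical and not part of the package; it derives the $isOneToOne argument by checking that no value occurs twice in the map. The dimension name department_id is an illustrative assumption.

```php
<?php
use Level23\Druid\Queries\QueryBuilder;

/**
 * Hypothetical helper: true when every value in the map is unique.
 */
function isOneToOne(array $map): bool
{
    return count($map) === count(array_unique($map));
}

/**
 * Sketch: translate a "department_id" dimension with a predefined list.
 */
function withDepartmentName(QueryBuilder $builder): QueryBuilder
{
    $departments = [1 => 'IT', 2 => 'Finance'];

    return $builder->inlineLookup(
        $departments,
        'department_id',         // the dimension to transform
        'department',            // the output name in the result set
        'Unknown',               // replacement for ids missing in the map
        isOneToOne($departments) // true here: both values are unique
    );
}
```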

multiValueListSelect()

This dimension spec retains only the values that are present in the given list.

See:

- https://druid.apache.org/docs/latest/querying/multi-value-dimensions.html

- https://druid.apache.org/docs/latest/querying/dimensionspecs.html#filtered-dimensionspecs

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $dimension | tags | The name of the dimension which contains multiple values. |
| array | Required | $values | ['a', 'b', 'c'] | Only use the values in the multi-value dimension which are present in this list. |

| string | Optional | $as |

myTags | The name where the result will be available by in the result set. |

| string/DataType | Optional | $outputType |

DataType::STRING | The data type of the dimension value. |

Example:

multiValueRegexSelect()

This dimension spec retains only the values matching a regex.

See:

- https://druid.apache.org/docs/latest/querying/multi-value-dimensions.html

- https://druid.apache.org/docs/latest/querying/dimensionspecs.html#filtered-dimensionspecs

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $dimension | tags | The name of the dimension which contains multiple values. |
| string | Required | $regex | "^ab" | The java regex pattern for the values which should be used in the resultset. |
| string | Optional | $as | myTags | The name where the result will be available by in the result set. |
| string/DataType | Optional | $outputType | DataType::STRING | The data type of the dimension value. |

Example:

multiValuePrefixSelect()

This dimension spec retains only the values matching the given prefix.

See:

- https://druid.apache.org/docs/latest/querying/multi-value-dimensions.html

- https://druid.apache.org/docs/latest/querying/dimensionspecs.html#filtered-dimensionspecs

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $dimension | tags | The name of the dimension which contains multiple values. |
| string | Required | $prefix | test | Return all multi-value items which start with this given prefix. |
| string | Optional | $as | myTags | The name where the result will be available by in the result set. |
| string/DataType | Optional | $outputType | DataType::STRING | The data type of the dimension value. |

Example:

QueryBuilder: Metric Aggregations

Metrics are fields which you normally aggregate, like summing the values of this field. Typical examples are:

- Revenue

- Hits

- NrOfTimes Clicked / Watched / Bought

- Conversions

- PageViews

- Counters

To aggregate a metric, you can use one of the methods below.

All metric aggregations support a filter selection. If this is given, the metric aggregation will only be applied to the records where the filters match.

Example:
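A sketch of a filtered aggregation. The function name and the pageViews and os columns are illustrative. The where() call with a dimension, an operator and a value is an assumption about the FilterBuilder, as is the Level23\Druid\Filters namespace.

```php
<?php
use Level23\Druid\Filters\FilterBuilder;
use Level23\Druid\Queries\QueryBuilder;

/**
 * Sketch: sum "pageViews" only for the records where os is "Android".
 */
function androidPageViews(QueryBuilder $builder): QueryBuilder
{
    return $builder->sum(
        'pageViews',
        'pageViewsByAndroid',
        'long', // the output type, passed as a string
        function (FilterBuilder $filter) {
            // Only records matching this filter are aggregated.
            $filter->where('os', '=', 'Android');
        }
    );
}
```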

See also this page: https://druid.apache.org/docs/latest/querying/aggregations.html

count()

This aggregation will return the number of rows which match the filters.

Please note the count aggregator counts the number of Druid rows, which does not always reflect the number of raw events ingested. This is because Druid can be configured to roll up data at ingestion time. To count the number of ingested rows of data, include a count aggregator at ingestion time, and a longSum aggregator at query time.

Example:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $as | "nrOfRows" | The name which will be used in the output result |
| Closure | Optional | $filterBuilder | See example in the beginning of this chapter | A closure which receives a FilterBuilder. When given, we will only count the records which match with the given filter. |

sum()

The sum() aggregation computes the sum of values. With the long output type, the result is a 64-bit signed integer.

Note: Alternatives are: longSum(), doubleSum() and floatSum(), which allow you to directly specify the output type by using the appropriate method name. These methods do not have the $type parameter.

Example:

The sum() aggregation method has the following parameters:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $metric | "views" | The metric which you want to sum |
| string | Optional | $as | "totalViews" | The name which will be used in the output result |
| string/DataType | Optional | $type | DataType::LONG | The output type of the sum. This can either be long, float or double. See also the DataType enum |
| Closure | Optional | $filterBuilder | See example in the beginning of this chapter | A closure which receives a FilterBuilder. When given, we will only sum the records which match with the given filter. |

min()

The min() aggregation computes the minimum of all metric values.

Note: Alternatives are: longMin(), doubleMin() and floatMin(), which allow you to directly specify the output type by using the appropriate method name. These methods do not have the $type parameter.

Example:

The min() aggregation method has the following parameters:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $metric | "views" | The metric which you want to calculate the minimum value of. |
| string | Optional | $as | "minViews" | The name which will be used in the output result |
| string/DataType | Optional | $type | DataType::LONG | The output type. This can either be long, float or double. See also the DataType enum |
| Closure | Optional | $filterBuilder | See example in the beginning of this chapter | A closure which receives a FilterBuilder. When given, we will only calculate the minimum value of the records which match with the given filter. |

max()

The max() aggregation computes the maximum of all metric values.

Note: Alternatives are: longMax(), doubleMax() and floatMax(), which allow you to directly specify the output type by using the appropriate method name. These methods do not have the $type parameter.

Example:

The max() aggregation method has the following parameters:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $metric | "views" | The metric which you want to calculate the maximum value of. |
| string | Optional | $as | "maxViews" | The name which will be used in the output result |
| string/DataType | Optional | $type | DataType::LONG | The output type. This can either be long, float or double. See also the DataType enum |
| Closure | Optional | $filterBuilder | See example in the beginning of this chapter | A closure which receives a FilterBuilder. When given, we will only calculate the maximum value of the records which match with the given filter. |

first()

The first() aggregation computes the metric value with the minimum timestamp, or 0 if no rows exist.
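As a rough plain-PHP illustration of "the value with the minimum timestamp" (and, for comparison, the maximum timestamp used by last()), consider the sketch below. The rows and timestamps are hypothetical and the code does not use this package's API.

```php
<?php
// Hypothetical rows with a millisecond timestamp and a value.
$rows = [
    ['__time' => 1555315200000, 'device' => 'tablet'],
    ['__time' => 1555311600000, 'device' => 'phone'],
    ['__time' => 1555318800000, 'device' => 'desktop'],
];

// Order by timestamp ascending; the first element then has the minimum
// timestamp and the last element has the maximum timestamp.
usort($rows, fn (array $a, array $b): int => $a['__time'] <=> $b['__time']);

$first = $rows[0]['device'];
$last  = $rows[count($rows) - 1]['device'];
```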

Note: Alternatives are: longFirst(), doubleFirst(), floatFirst() and stringFirst(), which allow you to directly specify the output type by using the appropriate method name. These methods do not have the $type parameter.

Example:

The first() aggregation method has the following parameters:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $metric | "device" | The metric which you want to compute the first value of. |
| string | Optional | $as | "firstDevice" | The name which will be used in the output result |
| string/DataType | Optional | $type | DataType::LONG | The output type. This can either be string, long, float or double. See also the DataType enum. |
| Closure | Optional | $filterBuilder | See example in the beginning of this chapter | A closure which receives a FilterBuilder. When given, we will only compute the first value of the records which match with the given filter. |

last()

The last() aggregation computes the metric value with the maximum timestamp, or 0 if no rows exist.

Note that queries with last aggregators on a segment created with rollup enabled will return the rolled up value, and not the last value within the raw ingested data.

Note: Alternatives are: longLast(), doubleLast(), floatLast() and stringLast(), which allow you to directly specify the output type by using the appropriate method name. These methods do not have the $type parameter.

Example:

The last() aggregation method has the following parameters:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $metric | "device" | The metric which you want to compute the last value of. |
| string | Optional | $as | "lastDevice" | The name which will be used in the output result |
| string/DataType | Optional | $type | DataType::LONG | The output type. This can either be string, long, float or double. See also the DataType enum. |
| Closure | Optional | $filterBuilder | See example in the beginning of this chapter | A closure which receives a FilterBuilder. When given, we will only compute the last value of the records which match with the given filter. |

any()

The any() aggregation will fetch any metric value. This can also be null.

Note: Alternatives are: longAny(), doubleAny(), floatAny() and stringAny(), which allow you to directly specify the output type by using the appropriate method name. These methods do not have the $type parameter.

Example:

The any() aggregation method has the following parameters:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $metric | "device" | The metric which you want to fetch a value of. |
| string | Optional | $as | "anyDevice" | The name which will be used in the output result |
| string/DataType | Optional | $type | DataType::STRING | The output type. This can either be string, long, float or double. See DataType enum |
| int | Optional | $maxStringBytes | 2048 | When the type is string, you can specify here the max bytes of the string. Defaults to 1024. |
| Closure | Optional | $filterBuilder | See example in the beginning of this chapter | A closure which receives a FilterBuilder. When given, we will only fetch a value from the records which match with the given filter. |

javascript()

The javascript() aggregation computes an arbitrary JavaScript function over a set of columns (both metrics and dimensions are allowed). Your JavaScript functions are expected to return floating-point values.

NOTE: JavaScript-based functionality is disabled by default. Please refer to the Druid JavaScript programming guide for guidelines about using Druid's JavaScript functionality, including instructions on how to enable it: https://druid.apache.org/docs/latest/development/javascript.html

Example:

The javascript() aggregation method has the following parameters:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $as | "result" | The name which will be used in the output result |
| array | Required | $fieldNames | ["metric_field", "dimension_field"] | The columns which will be given to the fnAggregate function. Both metrics and dimensions are allowed. |
| string | Required | $fnAggregate | See example above. | A javascript function which does the aggregation. This function will receive the "current" value as first parameter. The other parameters will be the values of the columns as given in the $fieldNames parameter. |
| string | Required | $fnCombine | See example above. | A function which can combine two aggregation results. |
| string | Required | $fnReset | See example above. | A function which will reset a value. |
| Closure | Optional | $filterBuilder | See example in the beginning of this chapter | A closure which receives a FilterBuilder. When given, we will only apply the javascript function to the records which match with the given filter. |
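How the three functions cooperate can be sketched with PHP closures standing in for the JavaScript functions. This is only a model of the roles described in the table, under the assumption that partial results are computed per chunk of data and merged afterwards; the chunks and values are hypothetical.

```php
<?php
// PHP stand-ins for the three JavaScript functions (illustrative only).
$fnReset     = fn (): float => 0.0;
$fnAggregate = fn (float $current, float $a, float $b): float => $current + $a * $b;
$fnCombine   = fn (float $partialA, float $partialB): float => $partialA + $partialB;

// Two hypothetical chunks of rows; each row holds the values of the two
// columns that would be listed in $fieldNames.
$chunks = [
    [[1.0, 2.0], [3.0, 4.0]],
    [[5.0, 6.0]],
];

// Aggregate each chunk on its own, starting from the reset value.
$partials = [];
foreach ($chunks as $rows) {
    $current = $fnReset();
    foreach ($rows as [$a, $b]) {
        $current = $fnAggregate($current, $a, $b);
    }
    $partials[] = $current;
}

// Merge the partial results into the final value.
$result = array_reduce($partials, $fnCombine, $fnReset());
```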

hyperUnique()

The hyperUnique() aggregation uses HyperLogLog to compute the estimated cardinality of a dimension that has been aggregated as a "hyperUnique" metric at indexing time.

Please note: use distinctCount() when the Theta Sketch extension is available, as it is much faster.

See this page for more information: https://druid.apache.org/docs/latest/querying/hll-old.html#hyperunique-aggregator

This page also explains the usage of hyperUnique very well: https://cleanprogrammer.net/getting-unique-counts-from-druid-using-hyperloglog/

Example:

The hyperUnique() aggregation method has the following parameters:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $metric | "dimension" | The dimension that has been aggregated as a "hyperUnique" metric at indexing time. |
| string | Required | $as | "myField" | The name which will be used in the output result |
| bool | Optional | $round | true | The HyperLogLog algorithm generates decimal estimates with some error. "round" can be set to true to round off estimated values to whole numbers. Note that even with rounding, the cardinality is still an estimate. |
| bool | Optional | $isInputHyperUnique | false | Only affects ingestion-time behavior, and is ignored at query-time. Set to true to index pre-computed HLL (Base64 encoded output from druid-hll is expected). |

cardinality()

The cardinality() aggregation computes the cardinality of a set of Apache Druid dimensions, using HyperLogLog to estimate the cardinality.

Please note: use distinctCount() when the Theta Sketch extension is available, as it is much faster.

This aggregator will also be much slower than indexing a column with the hyperUnique() aggregator.

In general, we strongly recommend using the distinctCount() or hyperUnique() aggregator instead of the cardinality() aggregator if you do not care about the individual values of a dimension.

When setting $byRow to false (the default) it computes the cardinality of the set composed of the union of all dimension values for all the given dimensions. For a single dimension, this is equivalent to:

For multiple dimensions, this is equivalent to something akin to

When setting $byRow to true it computes the cardinality by row, i.e. the cardinality of distinct dimension combinations. This is equivalent to something akin to

For more information, see https://druid.apache.org/docs/latest/querying/hll-old.html#cardinality-aggregator.
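The difference between the two $byRow modes can be illustrated in plain PHP. Note that this sketch counts exactly, whereas the aggregator returns a HyperLogLog estimate; the rows and the dimension names `dim_1` and `dim_2` are hypothetical.

```php
<?php
// Hypothetical rows with two dimensions.
$rows = [
    ['dim_1' => 'a', 'dim_2' => 'x'],
    ['dim_1' => 'b', 'dim_2' => 'x'],
    ['dim_1' => 'a', 'dim_2' => 'a'],
    ['dim_1' => 'b', 'dim_2' => 'x'],
    ['dim_1' => 'b', 'dim_2' => 'a'],
];

// $byRow = false: distinct values in the union of both dimensions.
$allValues = array_merge(array_column($rows, 'dim_1'), array_column($rows, 'dim_2'));
$byValue   = count(array_unique($allValues));

// $byRow = true: distinct (dim_1, dim_2) combinations.
$combinations = array_map(
    fn (array $row): string => json_encode([$row['dim_1'], $row['dim_2']]),
    $rows
);
$byRow = count(array_unique($combinations));
```

Here the union of values is {a, b, x}, while four distinct combinations occur, so the two modes give different answers for the same data.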

Example:

You can also use a Closure function, which will receive a DimensionBuilder. In this way you can build more complex situations, for example:

The cardinality() aggregation method has the following parameters:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $as | "distinctCount" | The name which will be used in the output result |
| Closure/array | Required | $dimensionsOrDimensionBuilder | See example above. | An array with dimension(s) or a function which receives an instance of the DimensionBuilder class. You should select the dimensions which you want to use to calculate the cardinality over. |
| bool | Optional | $byRow | false | See above for more info. |
| bool | Optional | $round | true | The HyperLogLog algorithm generates decimal estimates with some error. "round" can be set to true to round off estimated values to whole numbers. Note that even with rounding, the cardinality is still an estimate. |

distinctCount()

The distinctCount() aggregation function computes the distinct number of occurrences of the given dimension.

This method uses the Theta Sketch extension, and it should be enabled to make use of this aggregator.

For more information, see: https://druid.apache.org/docs/latest/development/extensions-core/datasketches-theta.html

Example:

The distinctCount() aggregation method has the following parameters:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $dimension | "category_id" | The dimension of which you want to count the distinct values. |
| string | Optional | $as | "categoryCount" | The name which will be used in the output result |
| int | Optional | $size | 16384 | Must be a power of 2. Internally, size refers to the maximum number of entries the sketch object will retain. Higher size means higher accuracy but more space to store sketches. |
| Closure | Optional | $filterBuilder | See example in the beginning of this chapter | A closure which receives a FilterBuilder. When given, we will only count the records which match with the given filter. |

doublesSketch()

The doublesSketch() aggregation function will create a DoubleSketch data field which can be used by various post aggregation methods to do extra calculations over the collected data.

DoubleSketch is a mergeable streaming algorithm to estimate the distribution of values, and approximately answer queries about the rank of a value, probability mass function of the distribution (PMF) or histogram, cumulative distribution function (CDF), and quantiles (median, min, max, 95th percentile and such).

This method uses the datasketches extension, and it should be enabled to make use of this aggregator.

For more information, see: https://druid.apache.org/docs/latest/development/extensions-core/datasketches-quantiles.html

Example:

To view more information about the doubleSketch data, see the sketchSummary() post aggregation method.

The doublesSketch() aggregation method has the following parameters:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $metric | "salary" | The metric which you want to do calculations over. |
| string | Optional | $as | "salaryData" | The name which will be used in the output result. |
| int | Optional | $sizeAndAccuracy | 128 | Parameter that determines the accuracy and size of the sketch. Higher k means higher accuracy but more space to store sketches. Must be a power of 2 from 2 to 32768. See accuracy information in the DataSketches documentation for details. |
| int | Optional | $maxStreamLength | 1000000000 | This parameter is a temporary solution to avoid a known issue. It may be removed in a future release after the bug is fixed. This parameter defines the maximum number of items to store in each sketch. If a sketch reaches the limit, the query can throw IllegalStateException. To work around this issue, increase the maximum stream length. See accuracy information in the DataSketches documentation for how many bytes are required per stream length. |

QueryBuilder: Filters

With filters, you can filter on certain values. The following filters are available:

where()

This is probably the most used filter. It is very flexible.

This method uses the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $dimension | "cityName" | The dimension which you want to filter. |
| string | Required | $operator | "=" | The operator which you want to use to filter. See below for a complete list of supported operators. |
| mixed | Optional | $value | "Auburn" | The value which you want to use in your filter comparison. Set to null to match against NULL values. |
| string | Optional | $boolean | "and" / "or" | This influences how this filter will be joined with previously added filters. Should both filters apply ("and") or one or the other ("or")? Default is "and". |

The following $operator values are supported:

| Operator | Description |
|---|---|
| = | Check if the dimension is equal to the given value. |
| != | Check if the dimension is not equal to the given value. |
| <> | Same as != |
| > | Check if the dimension is greater than the given value. |
| >= | Check if the dimension is greater than or equal to the given value. |
| < | Check if the dimension is less than the given value. |
| <= | Check if the dimension is less than or equal to the given value. |
| like | Check if the dimension matches a SQL LIKE expression. Special characters supported are "%" (matches any number of characters) and "_" (matches any one character). |
| not like | Same as like, only now the dimension should not match. |
| javascript | Check if the dimension matches by using the given javascript function. The function takes a single argument, the dimension value, and returns either true or false. |
| not javascript | Same as javascript, only now the dimension should not match. |
| regex | Check if the dimension matches the given regular expression. |
| not regex | Check if the dimension does not match the given regular expression. |
| search | Check if the dimension partially matches the given string(s). When an array of values is given, the dimension value must contain all of the values specified in this search query spec. |
| not search | Same as search, only now the dimension should not match. |
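The two LIKE wildcards can be illustrated with a small plain-PHP sketch that translates a LIKE pattern into a regular expression. The helpers `likeToRegex()` and `matchesLike()` are hypothetical and not part of this package; escape characters are not handled, and Druid evaluates the real filter itself.

```php
<?php
/**
 * Translate a SQL LIKE pattern into an anchored PCRE pattern.
 * "%" becomes "any number of characters", "_" becomes "exactly one character".
 * (Hypothetical helper; no LIKE escape character is supported in this sketch.)
 */
function likeToRegex(string $pattern): string
{
    // Quote regex meta characters first; "%" and "_" are left untouched.
    $quoted = preg_quote($pattern, '/');

    return '/^' . str_replace(['%', '_'], ['.*', '.'], $quoted) . '$/suD';
}

function matchesLike(string $value, string $pattern): bool
{
    return preg_match(likeToRegex($pattern), $value) === 1;
}

$startsWithAu = matchesLike('Auburn', 'Au%');    // "%" matches "burn"
$oneCharacter = matchesLike('Auburn', 'A_burn'); // "_" matches "u"
$noWildcard   = matchesLike('Auburn', 'burn');   // no wildcard, so the whole value must be equal
```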

This method supports a quick equals shorthand. Example:

Is the same as

We also support using a Closure to group several filters into one filter. The closure will receive a FilterBuilder. For example:

This would be the same as an SQL equivalent:

Finally, you can also supply a raw filter object. For example:

However, this is not recommended and should not be needed.

orWhere()

Same as where(), but this filter is joined with previously added filters using "or" instead of "and".

whereNot()

With this filter you can build a filterset which should NOT match. It is thus inverted.

Example:

You can use this in combination with all the other filters!

This method has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| Closure | Required | $filterBuilder | See example above. | A closure function which will receive a FilterBuilder object. All applied filters will be inverted. |
| string | Optional | $boolean | "and" | This influences how this filter will be joined with previously added filters. Should both filters apply ("and") or one or the other ("or")? Default is "and". |

orWhereNot()

Same as whereNot(), but this filter is joined with previously added filters using "or" instead of "and".

whereNull()

Druid has changed its NULL handling. You can now configure it to store NULL values by configuring druid.generic.useDefaultValueForNull=false.

If this is configured, you can filter on NULL values with this filter.

This method has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $column | "city" | The column or virtual column which you want to filter on null values. |
| string | Optional | $boolean | "and" | This influences how this filter will be joined with previously added filters. Should both filters apply ("and") or one or the other ("or")? Default is "and". |

Example:

orWhereNull()

Same as whereNull(), but this filter is joined with previously added filters using "or" instead of "and".

whereIn()

With this method you can filter on records using multiple values.

This method has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $dimension | country_iso | The dimension which you want to filter |
| array | Required | $items | ["it", "de", "au"] | A list of values. We will return records where the dimension is in this list. |
| string | Optional | $boolean | "and" | This influences how this filter will be joined with previously added filters. Should both filters apply ("and") or one or the other ("or")? Default is "and". |

Example:

orWhereIn()

Same as whereIn(), but this filter is joined with previously added filters using "or" instead of "and".

whereArrayContains()

With this method you can filter if an array contains a given element.

This method has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $column | country_iso | Input column or virtual column name to filter on. |
| int/string/float/null | Required | $value | "it" | Array element value to match. This value can be null. |
| string | Optional | $boolean | "and" | This influences how this filter will be joined with previously added filters. Should both filters apply ("and") or one or the other ("or")? Default is "and". |

Example:

orWhereArrayContains()

Same as whereArrayContains(), but this filter is joined with previously added filters using "or" instead of "and".

whereBetween()

This filter will select records where the given dimension is greater than or equal to the given $minValue, and less than the given $maxValue.

The SQL equivalent would be:

This method has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $dimension | year | The dimension which you want to filter |
| int/float/string | Required | $minValue | 1990 | The minimum value where the dimension should match. It should be equal to or greater than this value. |
| int/float/string | Required | $maxValue | 2000 | The maximum value where the dimension should match. It should be less than this value. |
| DataType | Optional | $valueType | DataType::FLOAT | This determines how druid will interpret the min and max values in comparison with the existing values. When not given we will auto detect it. |
| string | Optional | $boolean | "and" | This influences how this filter will be joined with previously added filters. Should both filters apply ("and") or one or the other ("or")? Default is "and". |

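The boundary behaviour of whereBetween() (minimum inclusive, maximum exclusive) is easy to get wrong, so here is a plain-PHP sketch of the comparison. The `isBetween()` helper is hypothetical and only mirrors the description above; it is not part of this package.

```php
<?php
/**
 * Half-open range check: $min is inclusive, $max is exclusive.
 * (Hypothetical helper mirroring the whereBetween() description.)
 */
function isBetween(float $value, float $min, float $max): bool
{
    return $value >= $min && $value < $max;
}

$years = [1989, 1990, 1995, 2000, 2001];

// 1990 is included (equal to the minimum), 2000 is excluded (equal to the maximum).
$matching = array_values(array_filter(
    $years,
    fn (int $year): bool => isBetween($year, 1990, 2000)
));
```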
orWhereBetween()

Same as whereBetween(), but this filter is joined with previously added filters using "or" instead of "and".

whereColumn()

The whereColumn() filter compares two dimensions with each other. Only records where the dimensions match will be returned.

The whereColumn() filter has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string/Closure | Required | $dimensionA | "initials" | The dimension which you want to compare, or a Closure which will receive a DimensionBuilder which allows you to select a dimension in a more advanced way. |
| string/Closure | Required | $dimensionB | "first_name" | The dimension which you want to compare, or a Closure which will receive a DimensionBuilder which allows you to select a dimension in a more advanced way. |
| string | Optional | $boolean | "and" | This influences how this filter will be joined with previously added filters. Should both filters apply ("and") or one or the other ("or")? Default is "and". |

orWhereColumn()

Same as whereColumn(), but this filter is joined with previously added filters using "or" instead of "and".

whereInterval()

The Interval filter enables range filtering on columns that contain long millisecond values, with the boundaries specified as ISO 8601 time intervals. It is suitable for the __time column, long metric columns, and dimensions with values that can be parsed as long milliseconds.

This filter converts the ISO 8601 intervals to long millisecond start/end ranges. It will then use a between filter to see if the interval matches.
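The conversion described above can be sketched in plain PHP as follows. This is only an illustration of turning an ISO 8601 interval into millisecond bounds; the helpers `intervalToMillis()` and `inInterval()` are hypothetical, and the sketch assumes the start is inclusive and the end is exclusive.

```php
<?php
/**
 * Convert a raw "start/end" ISO 8601 interval into millisecond bounds.
 * (Hypothetical helper, not part of the druid-client package.)
 *
 * @return array{0: int, 1: int}
 */
function intervalToMillis(string $interval): array
{
    [$start, $end] = explode('/', $interval, 2);

    // "U" is seconds since the epoch, "v" the 3-digit millisecond part.
    $toMillis = fn (string $iso): int => (int) (new DateTimeImmutable($iso))->format('Uv');

    return [$toMillis($start), $toMillis($end)];
}

/** Assumption: the start is inclusive and the end is exclusive. */
function inInterval(int $timestampMs, int $fromMs, int $toMs): bool
{
    return $timestampMs >= $fromMs && $timestampMs < $toMs;
}

[$fromMs, $toMs] = intervalToMillis('2019-04-15T08:00:00.000Z/2019-04-15T09:00:00.000Z');
```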

This method has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $dimension | __time | The dimension which you want to filter |
| array | Required | $intervals | ['yesterday/now'] | See below for more info |
| string | Optional | $boolean | "and" / "or" | This influences how this filter will be joined with previously added filters. Should both filters apply ("and") or one or the other ("or")? Default is "and". |

The $intervals array can contain the following:

- an Interval object

- a raw interval string as used in druid. For example: "2019-04-15T08:00:00.000Z/2019-04-15T09:00:00.000Z"

- an interval string, separating the start and the stop with a / (for example "12-02-2019/13-02-2019")

- an array which contains 2 elements, a start and stop date. These can be a DateTime object, a unix timestamp or anything which can be parsed by DateTime::__construct

For more info, see also the interval() method.

Example:

orWhereInterval()

Same as whereInterval(), but this filter is joined with previously added filters using "or" instead of "and".

whereFlags()

This filter allows you to filter on a dimension where the value should match against your filter using a bitwise AND comparison.

64-bit integers are supported.
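A bitwise AND comparison can be illustrated in plain PHP. The sketch below assumes that "match" means every requested bit is set in the stored value; the flag constants and the `hasFlags()` helper are hypothetical and not part of this package.

```php
<?php
// Hypothetical flag bits, for illustration only.
const FLAG_ACTIVE   = 1;
const FLAG_VERIFIED = 2;
const FLAG_PREMIUM  = 32;

/**
 * True when every bit in $flags is also set in $value.
 * (Assumed meaning of "match"; hypothetical helper.)
 */
function hasFlags(int $value, int $flags): bool
{
    return ($value & $flags) === $flags;
}

$wanted = FLAG_ACTIVE | FLAG_PREMIUM; // 33

$allBitsSet = hasFlags(FLAG_ACTIVE | FLAG_VERIFIED | FLAG_PREMIUM, $wanted); // 35 contains 33
$bitMissing = hasFlags(FLAG_VERIFIED | FLAG_PREMIUM, $wanted);               // 34 lacks bit 1
```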

Druid has supported bitwise flags since version 0.20.2. For older versions we built our own variant, which requires JavaScript support. To make use of the JavaScript variant, you should pass true as the 4th parameter of this method.

JavaScript-based functionality is disabled by default. Please refer to the Druid JavaScript programming guide for guidelines about using Druid's JavaScript functionality, including instructions on how to enable it: https://druid.apache.org/docs/latest/development/javascript.html

Example:

This method has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $dimension | "flags" | The dimension which you want to filter on |
| int | Required | $flags | 64 | The flags which should match in the given dimension (comparing with a bitwise AND) |
| string | Optional | $boolean | "and" | This influences how this filter will be joined with previously added filters. Should both filters apply ("and") or one or the other ("or")? Default is "and". |
| boolean | Optional | $useJavascript | true | Older versions do not yet support the bitwiseAnd expression. Set this parameter to true to use a JavaScript alternative instead. |

orWhereFlags()

Same as whereFlags(), but this filter is joined with previously added filters using "or" instead of "and".

whereExpression()

This filter allows you to filter on a druid expression. See also: https://druid.apache.org/docs/latest/querying/math-expr

This filter allows for more flexibility, but it might be less performant than a combination of the other filters on this page due to the fact that not all filter optimizations are in place yet.

Example:

This method has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $expression | "((product_type == 42) && (!is_deleted))" | The expression to use for your filter. |
| string | Optional | $boolean | "and" | This influences how this filter will be joined with previously added filters. Should both filters apply ("and") or one or the other ("or")? Default is "and". |

orWhereExpression()

Same as whereExpression(), but this filter is joined with previously added filters using "or" instead of "and".

whereSpatialRectangular()

This filter allows you to filter on your records where your spatial dimension is within the given rectangular shape.

Example:

This method has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $dimension | "location" | The spatial dimension which you want to filter on. |
| array | Required | $minCoords | [0.350189, 51.248163] | List of minimum dimension coordinates for coordinates [x, y, z] |
| array | Required | $maxCoords | [-0.613861, 51.248163] | List of maximum dimension coordinates for coordinates [x, y, z] |
| string | Optional | $boolean | "and" | This influences how this filter will be joined with previously added filters. Should both filters apply ("and") or one or the other ("or")? Default is "and". |

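What "within the given rectangular shape" means can be sketched in plain PHP as a per-axis comparison against the minimum and maximum coordinates. The `withinRectangle()` helper and the coordinates are hypothetical, and treating both bounds as inclusive is an assumption of this sketch.

```php
<?php
/**
 * Check per axis that a point lies between the minimum and maximum coordinates.
 * (Hypothetical helper; inclusive bounds are an assumption.)
 */
function withinRectangle(array $point, array $minCoords, array $maxCoords): bool
{
    foreach ($point as $axis => $coordinate) {
        if ($coordinate < $minCoords[$axis] || $coordinate > $maxCoords[$axis]) {
            return false;
        }
    }

    return true;
}

// Hypothetical rectangle, given as [x, y] corners.
$minCoords = [-0.613861, 51.0];
$maxCoords = [0.350189, 52.0];

$inside  = withinRectangle([0.0, 51.5], $minCoords, $maxCoords);
$outside = withinRectangle([1.0, 51.5], $minCoords, $maxCoords);
```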
orWhereSpatialRectangular()

Same as whereSpatialRectangular(), but this filter is joined with previously added filters using "or" instead of "and".

whereSpatialRadius()

This filter allows you to filter on your records where your spatial dimension is within the radius of a given point.

Example:

This method has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $dimension | "location" | The spatial dimension which you want to filter on. |
| array | Required | $minCoords | [0.350189, 51.248163] | List of minimum dimension coordinates for coordinates [x, y, z] |
| array | Required | $maxCoords | [-0.613861, 51.248163] | List of maximum dimension coordinates for coordinates [x, y, z] |
| string | Optional | $boolean | "and" | This influences how this filter will be joined with previously added filters. Should both filters apply ("and") or one or the other ("or")? Default is "and". |

orWhereSpatialRadius()

Same as whereSpatialRadius(), but this filter is joined with previously added filters using "or" instead of "and".

whereSpatialPolygon()

This filter allows you to filter on your records where your spatial dimension is within a given polygon.

Example:

This method has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $dimension | "location" | The spatial dimension which you want to filter on. |
| array | Required | $abscissa | [0.350189, 51.248163] | (The x axis) Horizontal coordinates for the corners of the polygon |
| array | Required | $ordinate | [-0.613861, 51.248163] | (The y axis) Vertical coordinates for the corners of the polygon |
| string | Optional | $boolean | "and" | This influences how this filter will be joined with previously added filters. Should both filters apply ("and") or one or the other ("or")? Default is "and". |

orWhereSpatialPolygon()

Same as whereSpatialPolygon(), but this filter is joined with previously added filters using "or" instead of "and".

QueryBuilder: Having Filters

With having filters, you can filter out records after the data has been retrieved. This allows you to filter on aggregated values.

See also this page: https://druid.apache.org/docs/latest/querying/having.html
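The order of operations (first group and aggregate, then filter on the aggregated value) can be sketched in plain PHP. The rows and column names are hypothetical, and the code does not use this package's API.

```php
<?php
// Hypothetical raw rows.
$rows = [
    ['country' => 'nl', 'clicks' => 40],
    ['country' => 'de', 'clicks' => 20],
    ['country' => 'nl', 'clicks' => 30],
    ['country' => 'de', 'clicks' => 10],
];

// Step 1: group by country and aggregate the clicks.
$totalClicks = [];
foreach ($rows as $row) {
    $totalClicks[$row['country']] = ($totalClicks[$row['country']] ?? 0) + $row['clicks'];
}

// Step 2: the having step filters on the aggregated value,
// which a normal filter on the raw rows cannot do.
$result = array_filter($totalClicks, fn (int $total): bool => $total > 50);
```

No single raw row has more than 50 clicks, so a filter on the raw rows would return nothing, while the having step keeps the "nl" group with its total of 70.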

Below are all the having methods explained.

having()

The having() filter is very similar to the where() filter. It is very flexible.

This method has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $having | "totalClicks" | The metric which you want to filter. |
| string | Required | $operator | ">" | The operator which you want to use to filter. See below for a complete list of supported operators. |
| string/int | Required | $value | 50 | The value which you want to use in your filter comparison |
| string | Optional | $boolean | "and" / "or" | This influences how this having-filter will be joined with previously added having-filters. Should both filters apply ("and") or one or the other ("or")? Default is "and". |

The following $operator values are supported:

| Operator | Description |
|---|---|
| = | Check if the metric is equal to the given value. |
| != | Check if the metric is not equal to the given value. |
| <> | Same as != |
| > | Check if the metric is greater than the given value. |
| >= | Check if the metric is greater than or equal to the given value. |
| < | Check if the metric is less than the given value. |
| <= | Check if the metric is less than or equal to the given value. |
| like | Check if the metric matches a SQL LIKE expression. Special characters supported are "%" (matches any number of characters) and "_" (matches any one character). |
| not like | Same as like, only now the metric should not match. |

This method supports a quick equals shorthand. Example:

Is the same as

We also support using a Closure to group several having-filters into one filter. The closure will receive a HavingBuilder. For example:

This would be the same as an SQL equivalent:

Finally, you can also supply a raw filter or having-filter object. For example:

However, this is not recommended and should not be needed.

orHaving()

Same as having(), but this having-filter is joined with previously added having-filters using "or" instead of "and".

QueryBuilder: Virtual Columns

Virtual columns allow you to create a new "virtual" column based on an expression. This is very powerful, but not well documented in the Druid Manual.

Druid expressions allow you to do various actions, like:

- Execute a lookup and use the result

- Execute mathematical operations on values

- Use if, else expressions

- Concat strings

- Use a "case" statement

- Etc.

For the full list of available expressions, see this page: https://druid.apache.org/docs/latest/querying/math-expr

To use a virtual column, you should use the virtualColumn() method:

virtualColumn()

This method creates a virtual column based on the given expression.

Virtual columns are queryable column "views" created from a set of columns during a query.

A virtual column can potentially draw from multiple underlying columns, although a virtual column always presents itself as a single column.

Virtual columns can be used as dimensions or as inputs to aggregators.

NOTE: virtual columns are NOT automatically added to your output. Select a virtual column separately if you also want it in your output, or use selectVirtual() to do both at once.

Example:
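A sketch of a virtual column that feeds an aggregator. It assumes that `$builder` is a query builder instance created elsewhere and that a doubleSum() aggregator taking the metric and the output name is available; the column names are hypothetical.

```php
<?php
// Sketch only: $builder is assumed to be a QueryBuilder obtained elsewhere.
// The column names (promo_id, reward) and output names are hypothetical.
function sumRewardWithPromoBonus($builder)
{
    return $builder
        // Add 2 to the reward when the sale came through a promoter.
        ->virtualColumn('if(promo_id > 0, reward + 2, reward)', 'rewardWithBonus', 'double')
        // The virtual column is not part of the output by itself;
        // here it only serves as input for an aggregator (assumed method).
        ->doubleSum('rewardWithBonus', 'totalRewardWithBonus');
}
```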

This method has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $expression | if( dimension > 0, 2, 1) | The expression which you want to use to create this virtual column. |
| string | Required | $as | "myVirtualColumn" | The name of the virtual column created. You can use this name in a dimension (select it) or in an aggregation function. |
| string/DataType | Optional | $type | DataType::STRING | The output type of this virtual column. Possible values are: string, float, long and double. Default is string. See also the DataType enum. |

selectVirtual()

This method creates a virtual column as the method virtualColumn() does, but this method also selects the virtual column in the output.

Example:
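A sketch that creates a virtual column and selects it in one call. It assumes that `$builder` is a query builder instance created elsewhere; `isMobileDevice` and `deviceType` are hypothetical names.

```php
<?php
// Sketch only: $builder is assumed to be a QueryBuilder obtained elsewhere.
// "isMobileDevice" is a hypothetical dimension, "deviceType" a hypothetical output name.
function selectDeviceType($builder)
{
    // Creates the virtual column "deviceType" and adds it to the output.
    // The type is left out, so the documented default (string) applies.
    return $builder->selectVirtual(
        "if(isMobileDevice > 0, 'mobile', 'desktop')",
        'deviceType'
    );
}
```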

This method has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $expression | if( dimension > 0, 2, 1) | The expression which you want to use to create this virtual column. |
| string | Required | $as | "myVirtualColumn" | The name of the virtual column created. You can use this name in a dimension (select it) or in an aggregation function. |
| string/DataType | Optional | $type | DataType::STRING | The output type of this virtual column. Possible values are: string, float, long and double. Default is string. See also the DataType enum. |

QueryBuilder: Post Aggregations

Post aggregations are aggregations which are executed after the result is fetched from the druid database.

fieldAccess()

The fieldAccess() post aggregator method is not really an aggregation method itself, but you need it to access fields which are used in the other post aggregations.

For example, when you want to calculate the average salary per job function:

However, you can also use this shorthand, which will be converted to fieldAccess methods:

This is exactly the same. We will convert the given fields to fieldAccess() for you.
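The two forms can be sketched side by side. This assumes that `$builder` is a query builder instance created elsewhere and that aggregations with the output names `totalSalary` and `nrOfEmployees` were already added to it; both names are hypothetical.

```php
<?php
// Sketch only: $builder is assumed to be a QueryBuilder obtained elsewhere,
// with aggregations named "totalSalary" and "nrOfEmployees" already defined.

// Explicit form: avgSalary = totalSalary / nrOfEmployees, using fieldAccess().
function avgSalaryWithFieldAccess($builder)
{
    return $builder->divide('avgSalary', function ($postAggregations) {
        $postAggregations->fieldAccess('totalSalary', 'totalSalary');
        $postAggregations->fieldAccess('nrOfEmployees', 'nrOfEmployees');
    });
}

// Shorthand: the field names are converted to fieldAccess() calls.
function avgSalaryShorthand($builder)
{
    return $builder->divide('avgSalary', ['totalSalary', 'nrOfEmployees']);
}
```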

The fieldAccess() post aggregator has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $aggregatorOutputName | totalRevenue | This refers to the output name of the aggregator given in the aggregations portion of the query. |
| string | Required | $as | myField | The output name under which we can access it. |
| bool | Optional | $finalizing | false | Set this to true if you want to return a finalized value, such as an estimated cardinality. |

constant()

The constant() post aggregator method allows you to define a constant which can be used in a post aggregation function.

For example, when you want to calculate the area of a circle based on the radius, you can use a formula like below:

Find the circle area based on the formula (radius x radius x pi).
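A sketch of the circle-area calculation. It assumes that `$builder` is a query builder instance created elsewhere and that a value with the hypothetical output name `radius` is available to post aggregations.

```php
<?php
// Sketch only: $builder is assumed to be a QueryBuilder obtained elsewhere,
// and "radius" is a hypothetical output name available to post aggregations.

// area = (radius x radius) x pi
function circleArea($builder)
{
    return $builder->multiply('area', function ($postAggregations) {
        // radius x radius
        $postAggregations->multiply('radiusSquared', ['radius', 'radius']);
        // The static value pi, made available as a field.
        $postAggregations->constant(3.14159, 'pi');
    });
}
```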

The constant() post aggregator has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| int/float | Required | $numericValue | 3.14 | This will be our static value. |
| string | Required | $as | pi | The output name under which we can access it. |

expression()

The expression() post aggregator method allows you to supply a native Druid expression which computes a result value.

Druid expressions allow you to do various actions, like:

- Execute a lookup and use the result

- Execute mathematical operations on values

- Use if, else statements

- Concat strings

- Use a "case" statement

- Etc.

For the full list of available expressions, see this page: https://druid.apache.org/docs/latest/querying/math-expr

Example:
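A sketch of an expression post aggregator that adds two fields. It assumes that `$builder` is a query builder instance created elsewhere; `field1`, `field2` and `total` are hypothetical names.

```php
<?php
// Sketch only: $builder is assumed to be a QueryBuilder obtained elsewhere.
// "field1" and "field2" are hypothetical output names of earlier aggregations.
function totalOfTwoFields($builder)
{
    // Output name first, then the Druid expression.
    // $ordering and $outputType are optional and left out here.
    return $builder->expression('total', 'field1 + field2');
}
```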

The expression() post aggregator has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $as | pi | The output name under which we can access it. |
| string | Required | $expression | field1 + field2 | The expression which you want to compute. |
| string | Optional | $ordering | "numericFirst" | If no ordering (or null) is specified, the "natural" ordering is used. numericFirst ordering always returns finite values first, followed by NaN, and infinite values last. If the expression produces array or complex types, specify ordering as null and use outputType instead to use the correct type native ordering. |
| DataType/string | Optional | $outputType | DOUBLE | Output type is optional, and can be any native Druid type. Use a string value for ARRAY types (e.g. ARRAY<LONG>), or COMPLEX types (e.g. COMPLEX<json>). If not specified, the output type will be inferred from the expression. If specified and ordering is null, the type native ordering will be used for sorting values. If the expression produces array or complex types, this value must be non-null to ensure the correct ordering is used. If outputType does not match the actual output type of the expression, Druid will attempt to coerce the value to the specified type, possibly failing if coercion is not possible. |

divide()

The divide() post aggregator method divides the given fields. If a value is divided by 0, the result will always be 0.

Example:

The first parameter is the name under which the result will be available in the output. The fields which you want to divide can be supplied in several ways, described below:

Method 1: array

You can supply the fields which you want to use in your division as an array. They will be converted to fieldAccess() calls for you.

Example:
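A sketch of the array form, assuming that `$builder` is a query builder instance created elsewhere and that `totalSalary` and `nrOfEmployees` are (hypothetical) output names of earlier aggregations.

```php
<?php
// Sketch only: $builder is assumed to be a QueryBuilder obtained elsewhere.
function divideWithArray($builder)
{
    // avgSalary = totalSalary / nrOfEmployees
    return $builder->divide('avgSalary', ['totalSalary', 'nrOfEmployees']);
}
```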

Method 2: Variable-length argument lists

You can supply the fields which you want to use in your division as extra arguments in the method call.

They will be converted to fieldAccess() calls for you.

Example:
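A sketch of the variable-length form, assuming that `$builder` is a query builder instance created elsewhere and that `totalSalary` and `nrOfEmployees` are (hypothetical) output names of earlier aggregations.

```php
<?php
// Sketch only: $builder is assumed to be a QueryBuilder obtained elsewhere.
function divideWithArguments($builder)
{
    // The fields follow the output name as separate arguments.
    return $builder->divide('avgSalary', 'totalSalary', 'nrOfEmployees');
}
```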

Method 3: Closure

You can also supply a closure, which allows you to build more advanced math calculations.

Example:
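A sketch of the closure form with a nested calculation. It assumes that `$builder` is a query builder instance created elsewhere, that the object passed to the closure offers the same post aggregation methods, and that `totalSalary` (a yearly total) and `nrOfEmployees` are hypothetical output names of earlier aggregations.

```php
<?php
// Sketch only: $builder is assumed to be a QueryBuilder obtained elsewhere.

// avgMonthlySalary = totalSalary / (nrOfEmployees x 12)
function divideWithClosure($builder)
{
    return $builder->divide('avgMonthlySalary', function ($postAggregations) {
        // Numerator: the yearly salary total.
        $postAggregations->fieldAccess('totalSalary', 'totalSalary');
        // Denominator: number of employees times twelve months.
        $postAggregations->multiply('employeeMonths', function ($inner) {
            $inner->fieldAccess('nrOfEmployees', 'nrOfEmployees');
            $inner->constant(12, 'monthsPerYear');
        });
    });
}
```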

The divide() post aggregator has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $as | pi | The output name under which we can access it. |
| array/Closure/...string | Required | $fieldOrClosure | ['totalSalary', 'nrOfEmployees'] | The fields which you want to divide. See above for more information. |

multiply()

The multiply() post aggregator method multiplies the given fields.

Example:
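A sketch, assuming that `$builder` is a query builder instance created elsewhere; `pricePerItem` and `nrOfItems` are hypothetical output names of earlier aggregations.

```php
<?php
// Sketch only: $builder is assumed to be a QueryBuilder obtained elsewhere.
function multiplyPriceByItems($builder)
{
    // totalCost = pricePerItem x nrOfItems
    return $builder->multiply('totalCost', ['pricePerItem', 'nrOfItems']);
}
```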

The multiply() post aggregator has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $as | pi | The output name under which we can access it. |
| array/Closure/...string | Required | $fieldOrClosure | ['totalSalary', 'nrOfEmployees'] | The fields which you want to multiply. See the divide() method for more info. |

subtract()

The subtract() post aggregator method subtracts the given fields.

Example:
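A sketch, assuming that `$builder` is a query builder instance created elsewhere; `totalRevenue` and `totalCost` are hypothetical output names of earlier aggregations.

```php
<?php
// Sketch only: $builder is assumed to be a QueryBuilder obtained elsewhere.
function subtractCostFromRevenue($builder)
{
    // profit = totalRevenue - totalCost
    return $builder->subtract('profit', ['totalRevenue', 'totalCost']);
}
```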

The subtract() post aggregator has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $as | pi | The output name under which we can access it. |
| array/Closure/...string | Required | $fieldOrClosure | ['totalSalary', 'nrOfEmployees'] | The fields which you want to subtract. See the divide() method for more info. |

add()

The add() post aggregator method adds the given fields.

Example:
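A sketch, assuming that `$builder` is a query builder instance created elsewhere; `mobileVisitors` and `desktopVisitors` are hypothetical output names of earlier aggregations.

```php
<?php
// Sketch only: $builder is assumed to be a QueryBuilder obtained elsewhere.
function addVisitorCounts($builder)
{
    // totalVisitors = mobileVisitors + desktopVisitors
    return $builder->add('totalVisitors', ['mobileVisitors', 'desktopVisitors']);
}
```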

The add() post aggregator has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $as | pi | The output name under which we can access it. |
| array/Closure/...string | Required | $fieldOrClosure | ['totalSalary', 'nrOfEmployees'] | The fields which you want to add. See the divide() method for more info. |

quotient()

The quotient() post aggregator method will calculate the quotient over the given field values. The quotient division behaves like regular floating point division.

Example:
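A sketch, assuming that `$builder` is a query builder instance created elsewhere; `totalClicks` and `totalImpressions` are hypothetical output names of earlier aggregations.

```php
<?php
// Sketch only: $builder is assumed to be a QueryBuilder obtained elsewhere.
function clickThroughRate($builder)
{
    // clickThroughRate = totalClicks / totalImpressions, using regular
    // floating point division (unlike divide(), which yields 0 when
    // dividing by 0).
    return $builder->quotient('clickThroughRate', ['totalClicks', 'totalImpressions']);
}
```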

The quotient() post aggregator has the following arguments:

| Type | Optional/Required | Argument | Example | Description |
|---|---|---|---|---|
| string | Required | $as | pi | The output name under which we can access it. |
| array/Closure/...string | Required | $fieldOrClosure | ['totalSalary', 'nrOfEmployees'] | The fields whose quotient you want to calculate. See the divide() method for more info. |

longGreatest() and doubleGreatest()

The longGreatest() and doubleGreatest() post aggregation methods compute the maximum of all fields.

The difference between the doubleMax() aggregator and the doubleGreatest() post-aggregator is that doubleMax returns the highest value of all rows for one specific column, while doubleGreatest returns the highest value of multiple columns in one row. These are similar to the SQL MAX and GREATEST functions.

Example:

The longGreatest() and doubleGreatest() post aggregator have the following arguments:

| Type | Optional/Required | Argument | Example | Description |

|---|---|---|---|---|

| string | Required | $as |