Download the PHP package ccmbenchmark/bigquery-bundle without Composer

On this page you can find all versions of the php package ccmbenchmark/bigquery-bundle. It is possible to download/install these versions without Composer. Possible dependencies are resolved automatically.


Package: bigquery-bundle

Short description: Batch upload data to Google BigQuery with Symfony

License: MIT

Information about the package bigquery-bundle

BigQuery Bundle

This bundle offers a simple way to batch upload data to Google BigQuery.

Concept

Three concepts are useful when working with this bundle:

Entities

An entity is any object implementing \CCMBenchmark\BigQueryBundle\BigQuery\Entity\RowInterface.

This interface extends JsonSerializable to handle the export to bigquery.

An entity is coupled to a metadata object.
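As a rough illustration of the entity concept, the sketch below shows a class whose `jsonSerialize()` output would become one row. `PageView` and its fields are hypothetical, and the local `RowInterface` is only a stand-in so the snippet runs on its own; the bundle's real interface may declare more than what is shown here.

```php
<?php
declare(strict_types=1);

// Stand-in so the snippet runs on its own. In a real project, import
// \CCMBenchmark\BigQueryBundle\BigQuery\Entity\RowInterface instead.
interface RowInterface extends \JsonSerializable
{
}

// Hypothetical entity: one row describing a page view.
final class PageView implements RowInterface
{
    private string $url;
    private int $durationMs;
    private \DateTimeImmutable $viewedAt;

    public function __construct(string $url, int $durationMs, \DateTimeImmutable $viewedAt)
    {
        $this->url = $url;
        $this->durationMs = $durationMs;
        $this->viewedAt = $viewedAt;
    }

    // Assumption: the keys returned here match the column names of the target table.
    public function jsonSerialize(): array
    {
        return [
            'url' => $this->url,
            'duration_ms' => $this->durationMs,
            'viewed_at' => $this->viewedAt->format('Y-m-d H:i:s'),
        ];
    }
}

$row = new PageView('home', 1200, new \DateTimeImmutable('2024-01-15 10:30:00'));
$json = json_encode($row, JSON_THROW_ON_ERROR);
```

Because serialization goes through `JsonSerializable`, the entity decides which properties are exported and under which names.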

Metadata

A metadata object stores the schema of your entity, along with the other information needed to load the data into BigQuery.

A metadata class implements \CCMBenchmark\BigQueryBundle\BigQuery\MetadataInterface.
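To make the idea of "schema plus storage information" more concrete, here is a minimal sketch of what such a class might hold. The class deliberately does not implement `MetadataInterface`: the method names, the project, dataset and table identifiers are all hypothetical, and the methods the interface actually requires should be checked in the bundle sources. Only the field layout (`name`, `type`, `mode`) follows BigQuery's own schema format.

```php
<?php
declare(strict_types=1);

// Hypothetical metadata holder. The method names are illustrative guesses,
// not the bundle's MetadataInterface contract.
final class PageViewMetadata
{
    public function getProjectId(): string
    {
        return 'my-gcp-project'; // placeholder
    }

    public function getDatasetId(): string
    {
        return 'analytics'; // placeholder
    }

    public function getTableId(): string
    {
        return 'page_views'; // placeholder
    }

    // Schema expressed with BigQuery's field attributes: name, type, mode.
    public function getSchema(): array
    {
        return [
            ['name' => 'url', 'type' => 'STRING', 'mode' => 'REQUIRED'],
            ['name' => 'duration_ms', 'type' => 'INTEGER', 'mode' => 'REQUIRED'],
            ['name' => 'viewed_at', 'type' => 'TIMESTAMP', 'mode' => 'NULLABLE'],
        ];
    }
}

$metadata = new PageViewMetadata();
$columns = array_column($metadata->getSchema(), 'name');
```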

UnitOfWork

The UnitOfWork is provided by the bundle. It holds the data you add to it and then uploads that data to BigQuery.

Full example

Getting started

Install this package

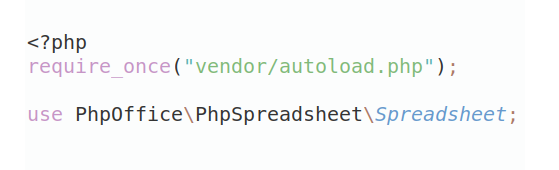

- Require the package:

composer require ccmbenchmark/bigquery-bundle

- Add it to your kernel:

Symfony 4+:

Symfony 3.4:
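The registration snippets are missing here, so the following is only a sketch of the usual Symfony convention. The bundle class name `CCMBenchmark\BigQueryBundle\BigQueryBundle` is an assumption derived from the namespace used elsewhere on this page; verify it in the package sources before relying on it.

```php
<?php
// config/bundles.php (Symfony 4+)
// Assumption: the bundle class is named BigQueryBundle.
return [
    // ... other bundles
    CCMBenchmark\BigQueryBundle\BigQueryBundle::class => ['all' => true],
];
```

On Symfony 3.4, the equivalent would be adding `new CCMBenchmark\BigQueryBundle\BigQueryBundle()` to the array returned by `AppKernel::registerBundles()`, under the same assumption about the class name.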

Set up your project on Google Cloud Storage and Google BigQuery

To upload data to Google BigQuery through Google Cloud Storage, you need:

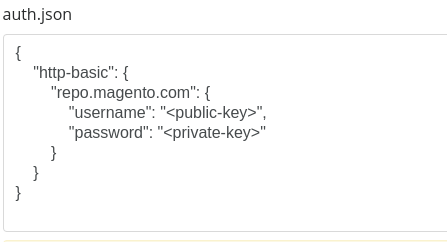

- A valid Google Cloud project with the following APIs enabled: BigQuery API, Cloud Storage

- A valid service account with its JSON credentials file

- Billing set up on your account

Note: Google may charge for the use of Google Cloud Storage and Google BigQuery, so using this bundle can produce charges on your account. You are responsible for them.

Set up the bundle

Create and declare your metadata

To create a metadata, write a new class implementing MetadataInterface.

To register your metadata in the UnitOfWork automatically, declare the class as a service and add the tag provided by this bundle.

At this point your metadata is registered in the UnitOfWork by a CompilerPass, and you are ready to upload data.

Working with UnitOfWork service

You need to use the service CCMBenchmark\BigQueryBundle\BigQuery\UnitOfWork.

This service offers a simple API.

Upload data to google bigquery

Call addData to queue a new entity for upload.

When all your entities are in the UnitOfWork, call flush to upload them.
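The add-then-flush pattern can be sketched as follows. Only the method names `addData` and `flush` come from the text above; `exportRows` and `InMemoryUnitOfWork` are hypothetical helpers, and the stand-in exists purely so the snippet runs without Google credentials. In an application you would pass the bundle's `UnitOfWork` service instead.

```php
<?php
declare(strict_types=1);

/**
 * Queue every row, then upload the whole batch with a single flush.
 *
 * @param object   $unitOfWork In a real application, the bundle's UnitOfWork service.
 * @param iterable $rows       Entities to upload.
 *
 * @return int Number of rows queued.
 */
function exportRows(object $unitOfWork, iterable $rows): int
{
    $count = 0;
    foreach ($rows as $row) {
        $unitOfWork->addData($row);
        $count++;
    }
    $unitOfWork->flush();

    return $count;
}

// Stand-in that only records calls; it performs no upload.
final class InMemoryUnitOfWork
{
    public array $pending = [];
    public int $flushCalls = 0;

    public function addData(\JsonSerializable $row): void
    {
        $this->pending[] = $row;
    }

    public function flush(): void
    {
        $this->flushCalls++;
        $this->pending = [];
    }
}

// Small factory producing hypothetical rows.
$makeRow = static function (string $url): \JsonSerializable {
    return new class($url) implements \JsonSerializable {
        private string $url;

        public function __construct(string $url)
        {
            $this->url = $url;
        }

        public function jsonSerialize(): array
        {
            return ['url' => $this->url];
        }
    };
};

$unitOfWork = new InMemoryUnitOfWork();
$sent = exportRows($unitOfWork, [$makeRow('home'), $makeRow('about')]);
```

Calling flush once per batch, rather than once per row, is what keeps this a batch upload.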

Request data from google bigquery

Call requestData to send a request to the specified projectId. It returns a \Google\Service\Bigquery\GetQueryResultsResponse.
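The response object stores each row as a list of cells, separate from the column names held in the schema. The helper below is a minimal sketch of how the two could be combined into associative arrays. `rowsToAssoc` is a hypothetical name, the parameter is left untyped so the snippet can be read without the Google client installed, and it assumes the query job has completed so that a schema is present. How the response is obtained from requestData is not shown because its exact arguments are not given on this page.

```php
<?php
declare(strict_types=1);

/**
 * Convert a GetQueryResultsResponse into a list of arrays keyed by column name.
 *
 * @param object $response Expected to expose getSchema() and getRows().
 */
function rowsToAssoc($response): array
{
    // Column names come from the schema, in the same order as the cells.
    $names = [];
    foreach ($response->getSchema()->getFields() as $field) {
        $names[] = $field->getName();
    }

    $result = [];
    // getRows() may be null when the query matched nothing.
    foreach ($response->getRows() ?? [] as $row) {
        $values = [];
        foreach ($row->getF() as $cell) {
            $values[] = $cell->getV();
        }
        $result[] = array_combine($names, $values);
    }

    return $result;
}
```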

Debugging

If the code throws no exception but you cannot find your data in BigQuery, follow these steps:

- Check Google Cloud Storage. A file named "reporting-[YYYY-mm-dd]-[uniqId].json" should be there. If there is no such file, check the permissions on GCP.

- Check Google BigQuery. A new job should have been submitted. Be sure to look in the project history. If the job failed, submit it again through the UI; BigQuery will display the errors.

Cleaning google cloud storage

This is outside the scope of this bundle, but to save storage you can define a lifecycle rule on your bucket.
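As one possible approach, a lifecycle rule can delete uploaded files once they reach a given age. The sketch below builds such a configuration using the field names of the Cloud Storage JSON API. `deleteAfterDays`, the 30-day threshold and the bucket name are illustrative assumptions, not recommendations from the bundle.

```php
<?php
declare(strict_types=1);

/**
 * Build a lifecycle configuration that deletes objects older than $days days.
 */
function deleteAfterDays(int $days): array
{
    return [
        'rule' => [
            [
                'action' => ['type' => 'Delete'],
                'condition' => ['age' => $days],
            ],
        ],
    ];
}

$lifecycle = deleteAfterDays(30);

// With google/cloud-storage installed and credentials configured, the
// configuration could be applied along these lines (placeholder bucket name):
//
//   $storage = new \Google\Cloud\Storage\StorageClient();
//   $storage->bucket('my-reporting-bucket')->update(['lifecycle' => $lifecycle]);
```

Pick an age long enough for failed load jobs to be inspected and resubmitted before the source file disappears.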

All versions of bigquery-bundle with dependencies

ext-json Version *

ext-curl Version *

google/cloud-bigquery Version ^1.23

google/cloud-storage Version ^1.25

google/apiclient Version ^2.10

symfony/dependency-injection Version ^4.1.12|^5.0|^6.0

symfony/config Version ^4.2|^5.0|^6.0

symfony/http-kernel Version ^4.0|^5.0|^6.0

guzzlehttp/guzzle Version ^5.3|^6.0|^7.0