Download the PHP package harm-smits/webcrawler-verifier without Composer

On this page you can find all versions of the php package harm-smits/webcrawler-verifier. It is possible to download/install these versions without Composer. Possible dependencies are resolved automatically.


Package: webcrawler-verifier

Short description: PHP library providing functionality to verify that user-agents are who they claim to be.

License: MIT

Information about the package webcrawler-verifier

webcrawler-verifier

Webcrawler-Verifier is a PHP library that checks that robots really come from the operator they claim to be from, e.g. that Googlebot is actually coming from Google and not from some spoofer.

Installation

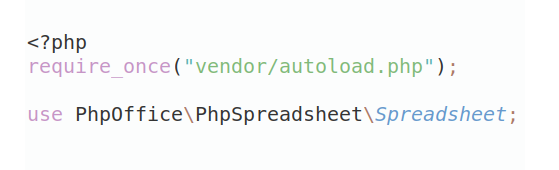

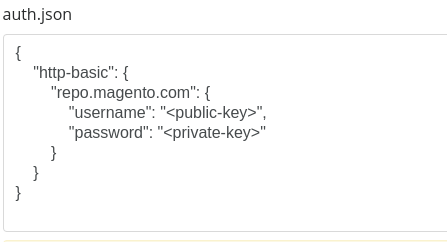

Install with Composer

If you're using Composer to manage dependencies, you can add Webcrawler-Verifier with it by running `composer require harm-smits/webcrawler-verifier`.

Usage


Built in crawler detection

By company

- Apple Inc.

- Baidu

- Begun

- Dassault Systèmes

- Michael Schöbel

- Google Inc.

- Grapeshot Limited

- IBM Germany Research & Development GmbH

- Kitsuregawa Laboratory, The University of Tokyo

- LinkedIn Inc.

- Mail.Ru Group

- Microsoft Corporation

- Mojeek Ltd.

- Odnoklassniki LLC

- OJSC Rostelecom

- Seznam.cz, a.s.

- Turnitin, LLC

- Twitter, Inc.

- W3C

- Wotbox Team

- Yahoo! Inc.

- Yandex LLC

By webcrawler name

- Coming soon

Contributions are welcome.

How it works

Step one is identification.

If the user-agent identifies as one of the bots you are checking for, it goes on to step two for verification. If not, no crawler is reported.
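The identification step can be pictured with a minimal sketch like the one below. It is not the library's actual code: the function name `identifyCrawler` and the pattern table are hypothetical, and a real implementation would likely use richer patterns per crawler.

```php
<?php
/**
 * Hypothetical identification step: map a User-Agent string to a crawler key.
 * The pattern table passed in is illustrative only, not the library's data.
 */
function identifyCrawler(string $userAgent, array $patterns): ?string
{
    foreach ($patterns as $name => $needle) {
        // Case-insensitive substring match on the identity the client claims.
        if (stripos($userAgent, $needle) !== false) {
            return $name;
        }
    }

    // No known crawler is claimed, so there is nothing to verify.
    return null;
}

// Illustrative table: crawler key => token expected in the User-Agent.
$patterns = [
    'googlebot' => 'Googlebot',
    'bingbot'   => 'bingbot',
];
```

A match here only means the client claims to be that crawler. Whether the claim is true is decided in step two.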

Step two is verification.

The robot named in the user-agent is verified by looking at the client's network address. The big operators work with a combination of reverse DNS and forward DNS lookups. That's not a hack, it's the officially recommended way. A reverse lookup of the IP yields a hostname under the provider's domain, and a forward lookup of that hostname points back to the same IP. This gives the crawler operators the freedom to change and add networks without risking being locked out of websites.
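The DNS round trip described above could look roughly like this. This is a sketch under assumptions, not the library's API: `verifyByDns`, its parameters and the injectable resolvers are hypothetical names introduced here. By default it uses PHP's `gethostbyaddr()` and `gethostbynamel()`; the latter only returns IPv4 addresses, so this sketch does not cover IPv6 clients.

```php
<?php
/**
 * Hypothetical reverse + forward DNS check.
 * $allowedSuffixes lists the domains an operator uses for its crawler hosts.
 * $reverse and $forward exist only so the lookups can be stubbed in tests.
 */
function verifyByDns(
    string $ip,
    array $allowedSuffixes,
    ?callable $reverse = null,
    ?callable $forward = null
): bool {
    $reverse = $reverse ?? 'gethostbyaddr';
    $forward = $forward ?? 'gethostbynamel';

    // 1. Reverse lookup: IP -> hostname. gethostbyaddr() returns the
    //    unmodified IP on failure and false on malformed input.
    $host = $reverse($ip);
    if (!is_string($host) || $host === '' || $host === $ip) {
        return false;
    }
    $host = strtolower(rtrim($host, '.'));

    // 2. The hostname must sit under one of the operator's domains.
    //    The leading dot prevents "fakegooglebot.com" from matching.
    $matches = false;
    foreach ($allowedSuffixes as $suffix) {
        $suffix = '.' . ltrim(strtolower($suffix), '.');
        if (substr($host, -strlen($suffix)) === $suffix) {
            $matches = true;
            break;
        }
    }
    if (!$matches) {
        return false;
    }

    // 3. Forward lookup: hostname -> IPs, which must include the original IP.
    $ips = $forward($host);

    return is_array($ips) && in_array($ip, $ips, true);
}
```

A call might look like `verifyByDns($clientIp, ['googlebot.com', 'google.com'])`, where the suffix list is an assumption to be taken from each operator's own documentation. The forward lookup matters because anyone who controls the reverse zone for their own IP range can make it return an arbitrary hostname.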

The other method is to maintain lists of IP addresses. This is used for operators that don't officially endorse the first method. It can also optionally be combined with the first method to avoid the one-time cost of the DNS verification.
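An IP-list check can be sketched as a membership test against CIDR ranges. The functions `ipInCidr` and `ipInList` are hypothetical helpers, not part of the library, and the ranges shown are reserved documentation networks rather than any operator's real addresses. The sketch handles IPv4 only and assumes a 64-bit PHP build.

```php
<?php
/**
 * Hypothetical helper: is an IPv4 address inside a CIDR range?
 * A bare address without "/bits" is treated as a /32.
 */
function ipInCidr(string $ip, string $cidr): bool
{
    [$subnet, $bits] = array_pad(explode('/', $cidr, 2), 2, '32');

    $ipLong     = ip2long($ip);
    $subnetLong = ip2long($subnet);
    if ($ipLong === false || $subnetLong === false || !ctype_digit($bits)) {
        return false;
    }

    $bits = (int) $bits;
    if ($bits > 32) {
        return false;
    }

    // Build the network mask, e.g. /24 -> 0xFFFFFF00 (64-bit integers assumed).
    $mask = $bits === 0 ? 0 : (~0 << (32 - $bits)) & 0xFFFFFFFF;

    return ($ipLong & $mask) === ($subnetLong & $mask);
}

/** Hypothetical helper: is the address inside any range of the list? */
function ipInList(string $ip, array $ranges): bool
{
    foreach ($ranges as $range) {
        if (ipInCidr($ip, $range)) {
            return true;
        }
    }

    return false;
}

// Placeholder ranges from the reserved documentation blocks.
$ranges = ['192.0.2.0/24', '198.51.100.7'];
```

Such a list is cheap to check but has to be kept current by hand, which is the trade-off against the DNS method.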

Except where it's required (for the second method), this project does not maintain IP lists. The ones that can currently be found on the internet all seem outdated. And that's exactly the problem: they will always lag behind the IP ranges that the operators actually use.

Contribution

Don't hesitate to create a pull request. Every contribution is appreciated.