Download the PHP package gverschuur/laravel-robotstxt without Composer


Package laravel-robotstxt

Short Description Set the robots.txt content dynamically based on the Laravel app environment.

License MIT

Information about the package laravel-robotstxt

Dynamic robots.txt ServiceProvider for Laravel 🤖

- Installation

- Composer

- Manual

- Service provider registration

- Usage

- Basic usage

- Custom settings

- Examples

- Allow directive

- Sitemaps

- The standard production configuration

- Adding multiple sitemaps

- Compatibility

- Testing

- robots.txt reference

Installation

Composer

Install the package with composer require gverschuur/laravel-robotstxt.

Manual

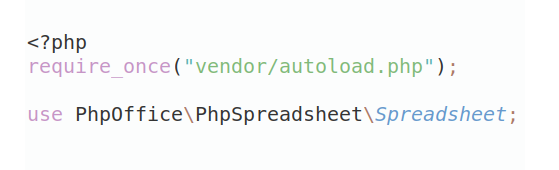

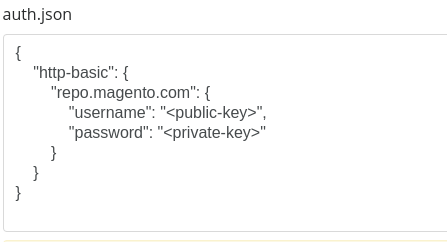

Add gverschuur/laravel-robotstxt to the require section of your composer.json and then run composer install.

Service provider registration

This package supports Laravel's package auto-discovery, so no further setup is needed. If you wish to register the package manually, add its service provider to the providers array in config/app.php.
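For manual registration, the entry might look like the excerpt below. The provider class name is an assumption that this page does not confirm; the authoritative name is the one listed under extra.laravel.providers in the package's composer.json, which is the entry auto-discovery reads.

```php
<?php

// Excerpt of config/app.php: only the providers entry is shown.
return [
    'providers' => [
        // ...keep the existing service providers here...

        // Assumed class name; verify it against the package's composer.json.
        Verschuur\Laravel\RobotsTxt\Providers\RobotsTxtProvider::class,
    ],
];
```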

Usage

Basic usage

This package adds a /robots.txt route to your application. Remember to remove any physical robots.txt file from your public directory; otherwise it takes precedence over Laravel's route and this package will not work.

By default, the production environment serves a record for User-agent: * with an empty Disallow: line, while every other environment serves User-agent: * with Disallow: /.

In other words, a default install allows all robots on a production environment and disallows them on every other environment.

Custom settings

If you need custom paths or sitemap entries, publish the configuration file with php artisan vendor:publish and choose this package's service provider when prompted.

This copies the robots-txt.php config file to your app's config folder. In this file, the rules are nested first by environment name and then by robot name, in which:

- {environment name}: the environment for which to define the paths.
- {robot name}: the robot for which to define the paths.
- disallow: all entries that will be used by the disallow directive.
- allow: all entries that will be used by the allow directive.
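Laid out as PHP, that nesting might look roughly like the sketch below. The 'environments' and 'paths' key names are inferred from the descriptions on this page rather than copied from the package, and the braced keys are placeholders for real values such as 'production' or '*'.

```php
<?php

// Sketch of config/robots-txt.php; compare the key names with your published copy.
return [
    'environments' => [
        '{environment name}' => [
            'paths' => [
                '{robot name}' => [
                    // Each entry becomes one Disallow line.
                    'disallow' => [],
                    // Each entry becomes one Allow line.
                    'allow' => [],
                ],
            ],
        ],
    ],
];
```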

By default, the environment name is set to production with a robot name of * and a disallow entry consisting of an empty string. This will allow all bots to access all paths on the production environment.

Note: If you do not define any environments in this configuration file (i.e. an empty configuration), the default will always be to disallow all bots for all paths.
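The fallback rule can be pictured with the small lookup below. pathsForEnvironment() is hypothetical and not part of the package. It assumes that an environment which is missing, or which has no paths, falls back to a single record blocking every robot, matching the note above and the non-production default.

```php
<?php
declare(strict_types=1);

/**
 * Hypothetical lookup, not the package's implementation: return the per-robot
 * rules for one environment, or a "block everything" record if none exist.
 *
 * @param array<string, array<string, mixed>> $environments
 * @return array<string, array<string, list<string>>>
 */
function pathsForEnvironment(array $environments, string $environment): array
{
    // A missing environment and missing/empty paths both count as "not configured".
    $paths = $environments[$environment]['paths'] ?? [];

    if ($paths === []) {
        // Fallback: every robot is disallowed from every path.
        return ['*' => ['disallow' => ['/']]];
    }

    return $paths;
}

$config = ['production' => ['paths' => ['*' => ['disallow' => ['']]]]];

echo json_encode(pathsForEnvironment($config, 'staging'), JSON_UNESCAPED_SLASHES), "\n";
// {"*":{"disallow":["/"]}}
```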

Examples

For brevity, the examples below omit the enclosing environment array key.

Allow all paths for all robots on production, and disallow all paths for every robot in staging.
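A sketch of that example, assuming a 'paths' key under each environment as the field descriptions above suggest. The array is assigned to a variable only so the fragment is valid PHP on its own.

```php
<?php

// Contents of the environments array (the enclosing key is omitted).
$environments = [
    'production' => [
        'paths' => [
            '*' => [
                // An empty string renders an empty Disallow line: nothing is blocked.
                'disallow' => [''],
            ],
        ],
    ],
    'staging' => [
        'paths' => [
            '*' => [
                // "/" blocks every path on the site.
                'disallow' => ['/'],
            ],
        ],
    ],
];
```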

Allow all paths for robot bender on production, but disallow /admin and /images on production for robot flexo.
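The same example as a config sketch, again assuming the 'paths' nesting inferred above. Each robot name becomes its own key next to the others.

```php
<?php

// Contents of the environments array (the enclosing key is omitted).
$environments = [
    'production' => [
        'paths' => [
            // bender may crawl everything.
            'bender' => [
                'disallow' => [''],
            ],
            // flexo is kept out of /admin and /images.
            'flexo' => [
                'disallow' => ['/admin', '/images'],
            ],
        ],
    ],
];
```

Assuming one record per robot, this should render a User-agent: bender record with an empty Disallow line, followed by a User-agent: flexo record with Disallow: /admin and Disallow: /images.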

Allow directive

Besides the more standard disallow directive, the allow directive is also supported.

Allow a path, but disallow its subpaths.
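A sketch using the hypothetical path /docs, with the same inferred 'paths' nesting. The Allow entry keeps the page /docs crawlable, while the Disallow entry /docs/ covers everything below it. Under the longest-match rule of RFC 9309, /docs/page matches the longer Disallow rule and is blocked, whereas /docs matches only the Allow rule.

```php
<?php

// Contents of the environments array (the enclosing key is omitted).
$environments = [
    'production' => [
        'paths' => [
            '*' => [
                // Blocks every URL that starts with /docs/ ...
                'disallow' => ['/docs/'],
                // ... while the page /docs itself stays allowed.
                'allow' => ['/docs'],
            ],
        ],
    ],
];
```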

When the file is rendered, the disallow directives will always be placed before the allow directives.
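The ordering rule can be illustrated with renderRecord(), a hypothetical helper that is not the package's renderer. It emits every Disallow line before any Allow line regardless of key order in the config, and treats a missing key as an empty list. Trimming the trailing space from an empty entry is a choice made for this sketch.

```php
<?php
declare(strict_types=1);

/**
 * Hypothetical helper, not the package's renderer: build one robots.txt record,
 * always emitting Disallow lines before Allow lines.
 *
 * @param array{disallow?: list<string>, allow?: list<string>} $rules
 */
function renderRecord(string $userAgent, array $rules): string
{
    $lines = ["User-agent: {$userAgent}"];

    // Disallow entries first, in the order they appear in the config.
    foreach ($rules['disallow'] ?? [] as $path) {
        // An empty entry yields a bare "Disallow:" line (nothing blocked).
        $lines[] = rtrim("Disallow: {$path}");
    }

    // Allow entries second, even if the allow key comes first in the array.
    foreach ($rules['allow'] ?? [] as $path) {
        $lines[] = rtrim("Allow: {$path}");
    }

    return implode("\n", $lines);
}

// The allow key is listed first here, yet its line is rendered last.
echo renderRecord('*', ['allow' => ['/docs'], 'disallow' => ['/docs/']]), "\n";
```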

If you don't need one of the two directives and wish to keep the configuration file clean, you can remove that key from the robot's array entirely.

Sitemaps

This package also allows you to add sitemaps to the robots file. By default, the production environment adds a sitemap.xml entry to the file. You can remove this default entry from the sitemaps array if you don't need it.

Because sitemap entries must always be absolute URLs, they are automatically wrapped with Laravel's url() helper function. The sitemap entries in the config file should therefore be relative to the webroot.
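The wrapping step can be approximated as below. absoluteUrl() and sitemapLines() are hypothetical; absoluteUrl() is a simplified stand-in for Laravel's url() helper, which in a real app derives the root from the current request or the configured application URL. Here the base URL is passed in explicitly so the sketch runs without Laravel.

```php
<?php
declare(strict_types=1);

/**
 * Hypothetical stand-in for Laravel's url() helper: join a base URL and a
 * webroot-relative path with exactly one slash between them.
 */
function absoluteUrl(string $baseUrl, string $path): string
{
    return rtrim($baseUrl, '/') . '/' . ltrim($path, '/');
}

/**
 * Turn webroot-relative sitemap entries into absolute "Sitemap:" lines.
 *
 * @param list<string> $sitemaps
 * @return list<string>
 */
function sitemapLines(string $baseUrl, array $sitemaps): array
{
    return array_map(
        fn (string $path): string => 'Sitemap: ' . absoluteUrl($baseUrl, $path),
        $sitemaps
    );
}

echo implode("\n", sitemapLines('https://example.com', ['sitemap.xml'])), "\n";
// Sitemap: https://example.com/sitemap.xml
```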

The standard production configuration
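Based on the defaults described above (robot *, an empty disallow entry, and a sitemap.xml entry), the production configuration plausibly looks like the sketch below. The key names are inferred, so compare them with your published config file.

```php
<?php

// Sketch of the default production setup in config/robots-txt.php.
return [
    'environments' => [
        'production' => [
            'paths' => [
                '*' => [
                    // Empty entry: all bots may access all paths.
                    'disallow' => [''],
                    'allow' => [],
                ],
            ],
            // Relative to the webroot; wrapped with url() when rendered.
            'sitemaps' => [
                'sitemap.xml',
            ],
        ],
    ],
];
```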

Adding multiple sitemaps
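To add more sitemaps, list each one in the environment's sitemaps array. The extra file names below are hypothetical, and every entry stays relative to the webroot because the package wraps it with url().

```php
<?php

// Contents of the environments array (the enclosing key is omitted).
$environments = [
    'production' => [
        // paths omitted for brevity
        'sitemaps' => [
            'sitemap.xml',
            'sitemap-posts.xml', // hypothetical
            'sitemap-pages.xml', // hypothetical
        ],
    ],
];
```

Each entry should then appear as its own Sitemap: line with an absolute URL.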

Compatibility

This package is compatible with Laravel 9, 10, 11 and 12. For a complete overview of supported Laravel and PHP versions, please refer to the 'Run test' workflow.

Testing

PHPUnit test cases are provided in /tests. Run the tests through composer run test or vendor/bin/phpunit --configuration phpunit.xml.

robots.txt reference

The following reference was used while creating this package: