Download the PHP package awanesia/scrap without Composer

On this page you can find all versions of the PHP package awanesia/scrap. These versions can be downloaded and installed without Composer. Dependencies, if any, are resolved automatically.

Package scrap

Short Description A web scraper for PHP to easily extract data from web pages -> party of laurentvw

License MIT

Homepage http://github.com/awanesia/scrap

Information about the package scrap

Scrapher

Scrapher is a PHP library to easily scrape data from web pages.

Getting Started

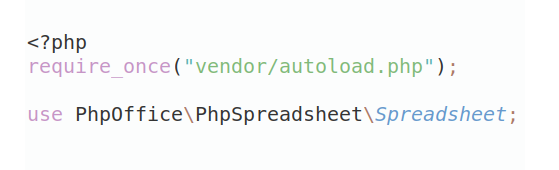

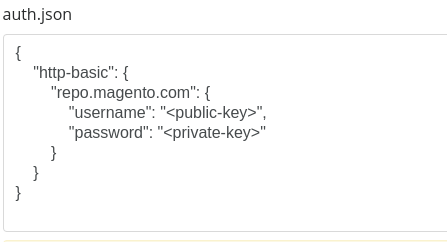

Installation

Add the package to your composer.json and run composer update.

{
    "require": {
        "laurentvw/scrapher": "2.*"
    }
}

For those still using v1.0 ("LavaCrawler"), the documentation is available here: https://github.com/Laurentvw/scrapher/tree/v1.0.2

Basic Usage

In order to start scraping, you need to set the URL(s) or HTML to scrape, and a type of selector to use (for example a regex selector, together with the data you wish to match).

Retrieving the results with the get method returns a list of arrays based on the match configuration that was set.

array(29) {
  [0] =>
  array(2) {
    'url' =>
    string(34) "https://www.google.com/webhp?tab=ww"
    'title' =>
    string(6) "Search"
  }
  ...
}

Documentation

Instantiating

When creating an instance of Scrapher, you may optionally pass one or more URLs.

Passing multiple URLs can be useful when you want to scrape the same data from different pages, for example when content is split across paginated pages.

If you prefer to fetch the page yourself using a dedicated client/library, you may also simply pass the actual content of a page. This can also be handy if you want to scrape other content besides just web pages (e.g. local files).

In some cases, you may want to add (read: append) URLs or contents on the fly.

Matching data using a Selector

Before retrieving or sorting the matched data, you need to choose a selector to match the data you want.

At the moment, Scrapher offers one selector out of the box, RegexSelector, which lets you select data using regular expressions.

A Selector takes an expression and a match configuration as its arguments.

For example, to match all links and their link name, you could do:
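To illustrate the idea without relying on the library's own API, here is a minimal sketch in plain PHP built on preg_match_all. The sample HTML, the 'name' key, and the variable names are assumptions made for this example; only the "id" key and the use of a regular expression come from the text above.

```php
<?php
// Sample HTML standing in for a fetched page (hypothetical content).
$html = '<p><a href="https://example.com/a">First</a> and '
      . '<a href="https://example.com/b">Second</a></p>';

// Capture group 1 is the href value, capture group 2 is the link text.
$regex = '/<a.*?href="(.*?)".*?>(.*?)<\/a>/ms';

// Hypothetical match configuration: each entry names an output field and
// the capture-group number ("id") that fills it.
$matchConfig = [
    ['name' => 'url',   'id' => 1],
    ['name' => 'title', 'id' => 2],
];

// PREG_SET_ORDER returns one array per match, indexed by capture group.
preg_match_all($regex, $html, $sets, PREG_SET_ORDER);

$results = [];
foreach ($sets as $set) {
    $row = [];
    foreach ($matchConfig as $field) {
        $row[$field['name']] = $set[$field['id']];
    }
    $results[] = $row;
}

print_r($results);
```

Each row in $results has the same shape as the entries in the sample output under Basic Usage: one key per configured field.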

Note that the kind of value passed to the "id" key may vary depending on what selector you're using, and can virtually be anything. You can think of the "id" key as the glue between the given expression and its selector.

RegexSelector uses http://php.net/manual/en/function.preg-match-all.php under the hood.

For your convenience, when using Regex, a match with 'id' => 0 will return the URL of the crawled page.

Retrieving & Sorting

Once you've specified a selector using the with method, you can start retrieving and/or sorting the data.

Retrieving

Offset & limit

Sorting

- See date_create
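As a rough illustration of sorting by a date field and then applying an offset and limit, the following plain-PHP sketch uses date_create, usort and array_slice. It does not use Scrapher's own methods; the 'date' and 'title' fields and the sample rows are assumptions made for this example.

```php
<?php
// Hypothetical matched rows with dates in mixed formats.
$matches = [
    ['title' => 'B', 'date' => '2014-03-01'],
    ['title' => 'A', 'date' => '1 January 2014'],
    ['title' => 'C', 'date' => '2013-12-25'],
];

// Sort newest first. date_create parses each string into a DateTime,
// and DateTime objects can be compared directly with <=>.
usort($matches, function (array $a, array $b): int {
    return date_create($b['date']) <=> date_create($a['date']);
});

// Offset 0, limit 2: keep only the two most recent rows.
$page = array_slice($matches, 0, 2);

print_r($page);
```

Parsing with date_create rather than comparing raw strings is what lets differently formatted dates sort correctly.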

Filtering

You can filter the matched data to refine your result set. Return true to keep the match, false to filter it out.
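The keep-or-drop contract described above is the same one PHP's array_filter uses, so it can be sketched in plain PHP. This is not the library's filter call; the sample rows and the $keepGoogle closure are assumptions made for this example.

```php
<?php
// Hypothetical matched rows, shaped like the sample result set.
$matches = [
    ['url' => 'https://www.google.com/webhp?tab=ww', 'title' => 'Search'],
    ['url' => 'https://example.com/about',           'title' => 'About'],
];

// Return true to keep the match, false to filter it out.
$keepGoogle = function (array $match): bool {
    return strpos($match['url'], 'google.com') !== false;
};

// array_filter preserves the original keys, so array_values reindexes from 0.
$filtered = array_values(array_filter($matches, $keepGoogle));

print_r($filtered);
```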

Mutating

In order to handle inconsistencies or formatting issues, you can alter the matched values to a more desirable value. Altering happens before filtering and sorting the result set. You can do so by using the apply index in the match configuration array with a closure that takes 2 arguments: the matched value and the URL of the crawled page.
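A closure of the shape described above might look like the following sketch, which turns root-relative links into absolute ones. The 'name' key, the sample values, and the loop that invokes the closure are assumptions made for this example; in the library, the closure would be called for you.

```php
<?php
// Hypothetical match configuration entry. 'apply' holds a closure that
// receives the matched value and the URL of the crawled page.
$field = [
    'name'  => 'url',
    'id'    => 1,
    'apply' => function (string $value, string $pageUrl): string {
        // Prefix root-relative links with the page's scheme and host.
        // (Protocol-relative links such as //host/path are not handled here.)
        if (strpos($value, '/') === 0) {
            $parts = parse_url($pageUrl);
            return $parts['scheme'] . '://' . $parts['host'] . $value;
        }
        return $value;
    },
];

$pageUrl   = 'https://example.com/blog/index.html';
$rawValues = ['/about', 'https://other.example/contact'];

// Stand-in for what a scraper would do with each matched value.
$mutated = [];
foreach ($rawValues as $raw) {
    $mutated[] = $field['apply']($raw, $pageUrl);
}

print_r($mutated);
```

Passing the page URL as the second argument is what makes this kind of normalisation possible, since a relative link cannot be resolved from the matched value alone.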

Validation

You may validate the matched data to ensure that the result set always contains the desired result. Validation happens after optionally mutating the data set with apply. To add the validation rules that should be applied to the data, use the validate index in the match configuration array with a closure that takes 2 arguments: the matched value and the URL of the crawled page. The closure should return true if the validation succeeded, and false if the validation failed. Matches that fail the validation will be removed from the result set.

- To make validation easier, we recommend using https://github.com/Respect/Validation in your project.
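Without any extra dependency, a validation closure can also rely on PHP's built-in filter_var. The sketch below is plain PHP rather than the library's internals: the sample values and the loop that separates valid from rejected matches are assumptions made for this example.

```php
<?php
// A rule in the shape described above: matched value and page URL in,
// boolean out.
$validate = function (string $value, string $pageUrl): bool {
    return filter_var($value, FILTER_VALIDATE_URL) !== false;
};

$pageUrl = 'https://example.com/';
$values  = ['https://example.com/a', 'not a url', 'https://example.com/b'];

// Stand-in for the scraper: keep values that pass, set aside those that fail.
$valid    = [];
$rejected = [];
foreach ($values as $value) {
    if ($validate($value, $pageUrl)) {
        $valid[] = $value;
    } else {
        $rejected[] = $value;
    }
}

print_r($valid);
print_r($rejected);
```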

Logging

If you wish to see the matches that were filtered out, or removed due to failed validation, you can use the getLogs method, which returns an array of message logs.

Did you know?

All methods are chainable

Only the methods get, first, last, count and getLogs will cause the chaining to end, as they all return a certain result.

You can scrape different data from one page

Suppose you're scraping a page, and you want to get all H2 titles, as well as all links on the page. You can do so without having to re-instantiate Scrapher.
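The underlying idea, running two different expressions against the same page content, can be sketched in plain PHP with preg_match_all. This does not show how Scrapher itself switches selectors; the sample HTML and both expressions are assumptions made for this example.

```php
<?php
// Hypothetical page content, fetched once and reused for both extractions.
$html = '<h2>News</h2><a href="/n1">One</a>'
      . '<h2>Sport</h2><a href="/s1">Two</a>';

// First pass: the text of every H2 heading.
preg_match_all('/<h2.*?>(.*?)<\/h2>/s', $html, $h2Matches);

// Second pass: the href of every link.
preg_match_all('/<a.*?href="(.*?)".*?>/s', $html, $linkMatches);

// Index 1 holds the contents of the first capture group for each match.
$titles = $h2Matches[1];
$links  = $linkMatches[1];

print_r($titles);
print_r($links);
```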

About

Author

Laurent Van Winckel - http://www.laurentvw.com - http://twitter.com/Laurentvw

License

Scrapher is licensed under the MIT License - see the LICENSE file for details

Contributing

Contributions to Laurentvw\Scrapher are always welcome. You make our lives easier by sending us your contributions through GitHub pull requests.

You may also create an issue to report bugs or request new features.