Download the PHP package proxycrawl/proxycrawl without Composer

On this page you can find all versions of the PHP package proxycrawl/proxycrawl. You can download and install these versions without Composer. Any dependencies are resolved automatically.

Package: proxycrawl

Short Description: A lightweight, dependency-free PHP class that acts as a wrapper for the ProxyCrawl API

License: Apache-2.0

Homepage: https://github.com/proxycrawl/proxycrawl-php

Information about the package proxycrawl

DEPRECATION NOTICE

:warning: IMPORTANT: This package is no longer maintained or supported. For the latest updates, please use our new package at crawlbase-php.

ProxyCrawl API PHP class

A lightweight, dependency-free PHP class that acts as a wrapper for the ProxyCrawl API.

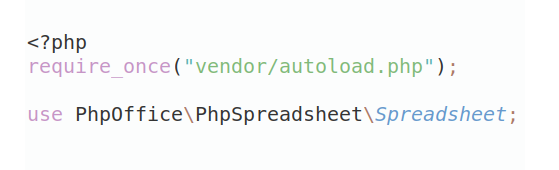

Installing

Choose a way of installing:

- Install the package from Packagist using Composer.

- Download the project from GitHub and save it into your project so you can require it:

require_once('proxycrawl-php/src/[class].php');

Crawling API

First initialize the CrawlingAPI class. You can get your free token here.

GET requests

Pass the URL you want to scrape, plus any of the options listed in the API documentation.

Example:
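A basic GET request could be wrapped as in the minimal sketch below. It assumes `$api` is a CrawlingAPI instance and that the response exposes `statusCode` and `body` properties; the constructor shape shown in the comment and the helper name `fetchBody` are also assumptions, not confirmed library API.

```php
<?php
// Hypothetical helper, not part of the library.
// Assumes $api is a CrawlingAPI instance, e.g. (constructor shape assumed):
//   $api = new ProxyCrawl\CrawlingAPI(['token' => 'YOUR_TOKEN']);
// Assumes the response object exposes statusCode and body.
function fetchBody($api, string $url, array $options = []): ?string
{
    $response = $api->get($url, $options);

    // Treat anything other than HTTP 200 as a failure.
    return (int) $response->statusCode === 200 ? $response->body : null;
}
```

Returning null on failure keeps the calling code to a single check before it uses the page body.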

You can pass any option supported by the ProxyCrawl API.

Example:
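Options are passed as an associative array. The sketch below builds such an array; the option names `user_agent` and `format` are used as illustrative assumptions, and `crawlOptions` is a hypothetical helper, so check the API documentation for the supported names.

```php
<?php
// Hypothetical helper. The option names user_agent and format are assumed
// examples; the API documentation lists what is actually supported.
function crawlOptions(string $userAgent, string $format = 'html'): array
{
    return [
        'user_agent' => $userAgent,
        'format'     => $format,
    ];
}

// Intended usage, assuming $api is a CrawlingAPI instance:
//   $response = $api->get('https://example.com', crawlOptions('Mozilla/5.0', 'json'));
```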

Optionally, set the store parameter to true to store a copy of the API response in ProxyCrawl Cloud Storage.

Example:
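One way to switch storage on is to add the store key to the options array, as in this sketch. `withStore` is a hypothetical helper, and sending the value as the string `'true'` is an assumption: if the options end up in a query string built with PHP's `http_build_query`, a boolean true would be serialized as `1` instead.

```php
<?php
// Hypothetical helper that enables storage for a request.
// Assumption: the API expects the literal string "true" for this option.
function withStore(array $options = []): array
{
    // array_merge overrides any existing 'store' entry and keeps the rest.
    return array_merge($options, ['store' => 'true']);
}

// Intended usage, assuming $api is a CrawlingAPI instance:
//   $response = $api->get('https://example.com', withStore(['format' => 'json']));
```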

POST requests

Pass the URL you want to scrape, the data you want to send (either JSON or a string), plus any of the options listed in the API documentation.

Example:
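A POST call could be sketched as follows. The argument order (URL, data, options) follows the description above; the helper name `submitData`, the `statusCode` and `body` response properties, and passing form fields as an array are assumptions.

```php
<?php
// Hypothetical helper. Assumes $api is a CrawlingAPI instance whose post()
// takes the URL, the data, and an options array, in that order.
function submitData($api, string $url, $data, array $options = []): ?string
{
    // $data may be a string; an array of form fields is assumed to work too.
    $response = $api->post($url, $data, $options);

    return (int) $response->statusCode === 200 ? $response->body : null;
}

// Intended usage:
//   $body = submitData($api, 'https://example.com/search', ['text' => 'example search']);
```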

You can send the data as application/json instead of x-www-form-urlencoded by setting the post_content_type option to json.
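A JSON POST could be sketched like this. The `post_content_type` option comes from the text above; encoding the payload with `json_encode` before handing it to `post()` is an assumption about what the library expects, and `postJson` is a hypothetical helper.

```php
<?php
// Hypothetical helper. Assumes post() accepts an already encoded JSON string
// as its data argument when post_content_type is set to json.
function postJson($api, string $url, array $payload, array $options = [])
{
    // Ask the API to forward the data as application/json.
    $options['post_content_type'] = 'json';

    return $api->post($url, json_encode($payload), $options);
}

// Intended usage:
//   $response = postJson($api, 'https://example.com/api', ['query' => 'example']);
```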

PUT requests

Pass the URL you want to scrape, the data you want to send (either JSON or a string), plus any of the options listed in the API documentation.

Example:
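A PUT call presumably mirrors POST. The sketch below assumes the client exposes a `put()` method with the same argument order (URL, data, options) and a `statusCode` response property; `updateResource` is a hypothetical helper.

```php
<?php
// Hypothetical helper. Assumes the client exposes put() with the same
// argument order as post(): URL, data, options.
function updateResource($api, string $url, $data, array $options = []): bool
{
    $response = $api->put($url, $data, $options);

    // Report success only for an HTTP 200 response.
    return (int) $response->statusCode === 200;
}

// Intended usage:
//   $updated = updateResource($api, 'https://example.com/items/1', ['name' => 'new name']);
```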

JavaScript requests

To scrape websites built with JavaScript frameworks such as React, Angular, or Vue, pass your JavaScript token and use the same calls. Note that only ->get is available for JavaScript requests, not ->post.

In the same way, you can pass additional JavaScript options.
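As a sketch of a JavaScript-specific option, the helper below builds an options array with a page wait time. The option name `page_wait` (in milliseconds) is an assumption to confirm against the API documentation, and `javascriptOptions` is a hypothetical helper.

```php
<?php
// Hypothetical helper. page_wait (milliseconds) is assumed here as an example
// of an option that only applies to JavaScript requests.
function javascriptOptions(int $pageWaitMs): array
{
    if ($pageWaitMs < 0) {
        throw new InvalidArgumentException('The page wait must not be negative.');
    }

    return ['page_wait' => $pageWaitMs];
}

// Intended usage, assuming $api was created with a JavaScript token:
//   $response = $api->get('https://example.com', javascriptOptions(3000));
```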

Original status

You can always get the original status and the ProxyCrawl status from the response. Read the ProxyCrawl documentation to learn more about those statuses.
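Reading both statuses could look like the sketch below. It assumes the response carries a `headers` object with `original_status` and `pc_status` fields; these names are assumptions, and `responseStatuses` is a hypothetical helper.

```php
<?php
// Hypothetical helper. Assumes the response has a headers object with
// original_status and pc_status fields; both names are assumptions.
function responseStatuses($response): array
{
    return [
        // ?? yields null instead of a warning when a field is missing.
        'original' => $response->headers->original_status ?? null,
        'pc'       => $response->headers->pc_status ?? null,
    ];
}

// Intended usage:
//   $statuses = responseStatuses($api->get('https://example.com'));
```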

Scraper API

First initialize the ScraperAPI class. You can get your free token here. Please note that only some websites are supported; check the API documentation for more information.

Pass the URL you want to scrape, plus any of the options listed in the API documentation.

Example:

Leads API

First initialize the LeadsAPI class. You can get your free token here.

Pass the domain where you want to search for leads.

Example:

Screenshots API usage

Initialize with your Screenshots API token and call the get method.
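Saving a screenshot by hand could be sketched as follows. The sketch assumes `$api` is a ScreenshotsAPI instance, that the response exposes `statusCode`, and that `body` holds the raw image bytes; `saveScreenshot` is a hypothetical helper.

```php
<?php
// Hypothetical helper. Assumes $api is a ScreenshotsAPI instance and that the
// response body contains the raw image bytes.
function saveScreenshot($api, string $url, string $path, array $options = []): bool
{
    $response = $api->get($url, $options);

    if ((int) $response->statusCode !== 200) {
        return false;
    }

    // file_put_contents returns the number of bytes written, or false on failure.
    return file_put_contents($path, $response->body) !== false;
}

// Intended usage:
//   saveScreenshot($api, 'https://example.com', '/tmp/example.jpg');
```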

Alternatively, you can specify a callback that automatically saves the file to the temporary folder.

You can also specify a file path via the saveToPath option.

Note that the $api->get(url, options) method accepts options.

Storage API usage

Initialize the Storage API using your private token.

Pass the URL you want to retrieve from ProxyCrawl Storage.

Alternatively, you can use the RID.

Note: Either the RID or the URL must be sent. Each is optional on its own, but you must send one of the two.
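The "URL or RID" rule can be checked before a lookup is made, as in this sketch. `storageLookupParams` is a hypothetical helper, and the parameter names `url` and `rid` are assumptions about the request format. Preferring the RID when both are given is a design choice of the sketch, not documented behavior.

```php
<?php
// Hypothetical helper that enforces the "URL or RID" rule before a lookup.
// The parameter names url and rid are assumptions about the request format.
function storageLookupParams(?string $url = null, ?string $rid = null): array
{
    if ($url === null && $rid === null) {
        throw new InvalidArgumentException('Either a URL or a RID is required.');
    }

    // Design choice of this sketch: prefer the RID when both are given.
    return $rid !== null ? ['rid' => $rid] : ['url' => $url];
}
```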

Delete request

To delete an item from your storage area, pass its RID.

Bulk request

To make a bulk request, send the list of RIDs as an array.

RIDs request

You can request a bulk list of RIDs from your storage area.

You can also specify a limit as a parameter.

Total Count

You can get the total number of documents in your storage area.

If you have questions or need help using the library, please open an issue or contact us.

Copyright 2023 ProxyCrawl